TL;DR

- Product DNA analysis classifies the structural reality of the product before the team chooses growth, pricing, trial, or GTM playbooks.

- The analysis is not just "what kind of product are we?" It examines how value delivery, topology, buyer map, activation, moat, expansion, and positioning interact.

- The output is a clearer strategic profile, a contradiction map, and a shorter list of strategies that actually fit.

- Teams usually get the most value when the analysis resolves disagreements that have already started showing up across product, sales, pricing, and marketing.

Most SaaS strategy debates are framed too late. The team is already arguing about PLG, pricing, onboarding, or repositioning before it has agreed on what the product structurally is.

That is why so many strategy choices feel plausible in isolation and destructive in practice:

- One person is reasoning from user delight

- Another from buyer behavior

- Another from revenue targets

- Another from the last company they admired

All of them may sound reasonable. All of them can still be wrong for the current product.

The real value is constraint. Once the product is classified honestly, half the strategic options fall away. The company stops debating fantasy versions of itself and starts designing around the version customers are actually experiencing.

"The point of Product DNA analysis is not to label the product beautifully. It is to stop the business from choosing strategies that the product has no structural chance of supporting."

— Jake McMahon, ProductQuant

What Product DNA Analysis Includes

At ProductQuant, the analysis is broader than one framework table. It is a structured read of the product system and the strategic tensions inside it.

1. Structural classification across the core dimensions

The starting layer is classification. What kind of value does the product deliver? Is it a workflow tool, a system of record, an intelligence layer, an automation platform? Is value single-player, multiplayer, network-based, or multi-stakeholder? Does the buyer match the user or sit above them?

These are not academic categories. They shape what the product can realistically support in pricing, activation, GTM, and expansion.

A workflow tool with single-player value can support frictionless self-serve. A system of record with team-dependent activation cannot — no matter how much the growth team wants it to.

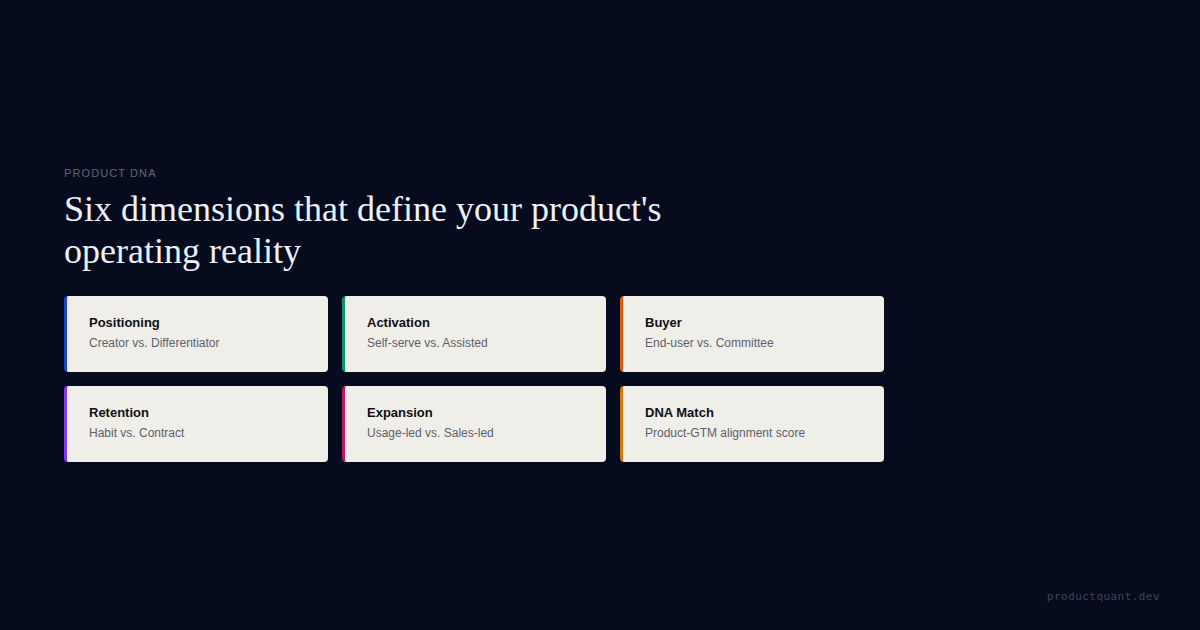

At ProductQuant, we classify across 6 core dimensions:

- Competitive positioning — category creator, differentiator, niche specialist, or disruptor

- Complexity — how much setup and expertise the product requires

- Activation pattern — fast-value, phased, or team-dependent

- Growth motion — PLG, sales-led, or hybrid

- Expansion model — seat-based, usage-based, module, tier, or cross-sell

- Retention moat type — data, workflow, network, or switching cost

Each dimension constrains what the others can do.
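One way to make the six dimensions concrete is to record a product's profile as a typed structure with one field per dimension. The sketch below is a hypothetical representation, not ProductQuant's internal tooling. The enum values mirror the categories listed above. The three-level `Complexity` scale is an assumption, because the text describes complexity without naming levels.

```python
from dataclasses import dataclass
from enum import Enum


class Positioning(Enum):
    CATEGORY_CREATOR = "category creator"
    DIFFERENTIATOR = "differentiator"
    NICHE_SPECIALIST = "niche specialist"
    DISRUPTOR = "disruptor"


class Complexity(Enum):
    # Assumption: a coarse 3-level scale for setup and expertise required.
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Activation(Enum):
    FAST_VALUE = "fast-value"
    PHASED = "phased"
    TEAM_DEPENDENT = "team-dependent"


class Motion(Enum):
    PLG = "PLG"
    SALES_LED = "sales-led"
    HYBRID = "hybrid"


class Expansion(Enum):
    SEAT = "seat-based"
    USAGE = "usage-based"
    MODULE = "module"
    TIER = "tier"
    CROSS_SELL = "cross-sell"


class Moat(Enum):
    DATA = "data"
    WORKFLOW = "workflow"
    NETWORK = "network"
    SWITCHING_COST = "switching cost"


@dataclass(frozen=True)
class DNAProfile:
    """Hypothetical record of one product's classification, one field per dimension."""
    positioning: Positioning
    complexity: Complexity
    activation: Activation
    motion: Motion
    expansion: Expansion
    moat: Moat

    def rows(self) -> list[tuple[str, str]]:
        """Return one (dimension, value) row per dimension, in the article's order."""
        return [
            ("Competitive positioning", self.positioning.value),
            ("Complexity", self.complexity.value),
            ("Activation pattern", self.activation.value),
            ("Growth motion", self.motion.value),
            ("Expansion model", self.expansion.value),
            ("Retention moat type", self.moat.value),
        ]


# Hypothetical profile, for illustration only.
profile = DNAProfile(
    positioning=Positioning.DIFFERENTIATOR,
    complexity=Complexity.HIGH,
    activation=Activation.PHASED,
    motion=Motion.HYBRID,
    expansion=Expansion.MODULE,
    moat=Moat.WORKFLOW,
)
for dimension, value in profile.rows():
    print(f"{dimension:<24} {value}")
```

Every field is an enum, so the profile cannot hold a value outside the agreed categories. That forces the team to pick one answer per dimension instead of leaving it vague.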

The classification step usually takes the most time, not because the categories are unclear, but because the team resists the answer. A company that sees itself as a category creator often turns out to be a differentiator. A company that wants PLG often has team-dependent activation. The classification layer forces the conversation the strategy meeting has been avoiding.

The stakes are visible in growth benchmarks:

- Bessemer's Cloud 100 analysis shows that companies at the $5M–$20M ARR stage face fundamentally different growth dynamics than earlier-stage companies

- ChartMogul's analysis of 1,000+ SaaS companies confirms that progression odds change dramatically at each milestone — the companies that reach $5M ARR in under 24 months have a significantly different path than those that take 36+ months

The difference is usually structural, not effort-based.

2. The activation and value path

Classification alone is not enough. Value has to become real somehow. Some products deliver it in minutes. Others need data, setup, approvals, or collaboration first. That difference changes everything: trials, onboarding, the viability of self-serve.

The critical question is not "how fast can we get users to value?"

It is "what does value actually require before it exists?"

A single-player analytics tool delivers value the moment a user connects one data source and sees one chart. A multiplayer collaboration tool delivers nothing until at least 3 people have joined and started interacting. The first product can support self-serve trials. The second needs guided onboarding and probably a sales touch.

We map activation across 3 types:

- Fast-value — value exists within the first session

- Phased — value arrives in stages as the user completes setup milestones

- Team-dependent — value only exists when multiple people or systems participate

Most strategy mistakes happen when a team designs a fast-value onboarding flow for a team-dependent product.

The result looks like an activation problem. It is actually a DNA mismatch.
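The question "what does value actually require before it exists?" can be turned into a small decision rule. The sketch below is a hypothetical illustration, not ProductQuant's method. The `ValueRequirements` fields and both thresholds are assumptions chosen to mirror the three definitions above: more than one person or system means team-dependent, and one setup step still counts as fast-value. The sketch also flags the mismatch just described, where the onboarding flow assumes a faster path than the product actually has.

```python
from dataclasses import dataclass
from enum import Enum


class Activation(Enum):
    FAST_VALUE = "fast-value"
    PHASED = "phased"
    TEAM_DEPENDENT = "team-dependent"


@dataclass(frozen=True)
class ValueRequirements:
    """What must happen before value exists (hypothetical inputs)."""
    people_needed: int            # distinct people who must participate
    systems_needed: int           # data sources or integrations that must be connected
    setup_milestones: int         # setup steps to complete before first value
    value_in_first_session: bool  # can a single user see value in session one?


def classify_activation(req: ValueRequirements) -> Activation:
    # Assumption: "multiple" means more than one person or more than one system.
    if req.people_needed > 1 or req.systems_needed > 1:
        return Activation.TEAM_DEPENDENT
    # Assumption: a single setup step still counts as first-session value.
    if req.value_in_first_session and req.setup_milestones <= 1:
        return Activation.FAST_VALUE
    return Activation.PHASED


def onboarding_mismatch(product: Activation, designed_for: Activation) -> bool:
    """True when onboarding assumes a faster value path than the product has."""
    order = [Activation.FAST_VALUE, Activation.PHASED, Activation.TEAM_DEPENDENT]
    return order.index(designed_for) < order.index(product)


# One user connects one data source and sees one chart.
analytics_tool = ValueRequirements(1, 1, 1, True)
# Nothing happens until at least 3 people have joined.
collab_tool = ValueRequirements(3, 0, 1, False)

print(classify_activation(analytics_tool).value)  # fast-value
print(classify_activation(collab_tool).value)     # team-dependent
print(onboarding_mismatch(classify_activation(collab_tool), Activation.FAST_VALUE))  # True
```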

In B2B SaaS companies with committee buying and guided activation, the time from first touch to paid conversion often runs 3-6 weeks. Not because the product is poorly designed. Because the activation path includes steps the product cannot compress: security reviews, procurement approvals, data migration, stakeholder alignment.

Treating this as a "conversion optimization problem" burns engineering time that should go into onboarding automation instead.

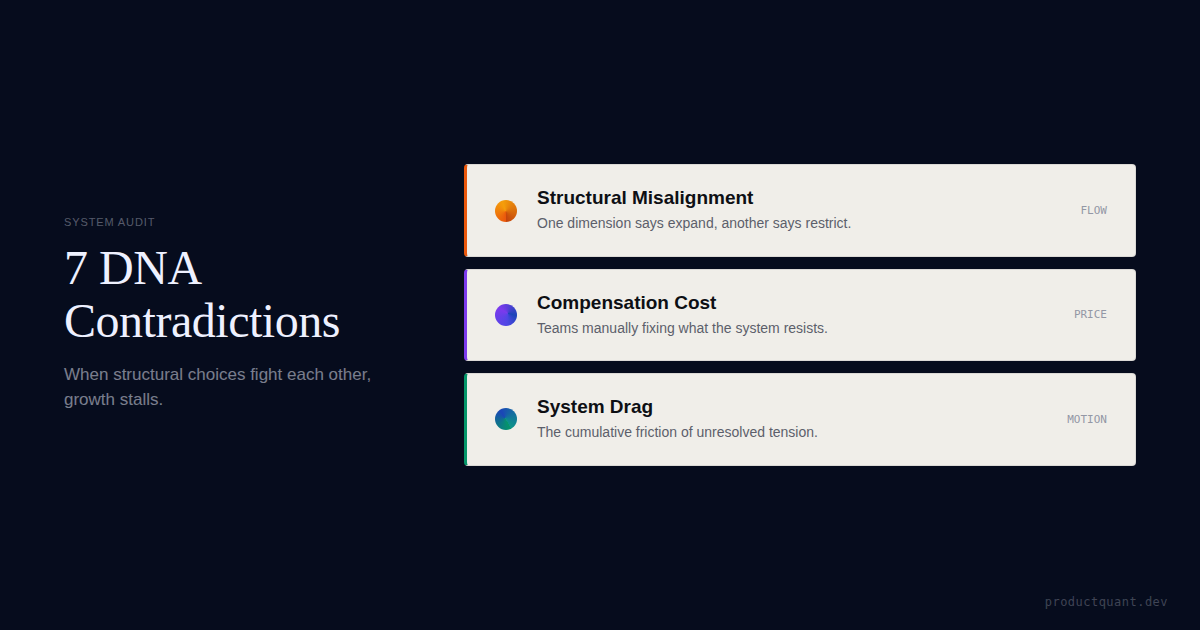

3. The contradiction map

Contradictions appear when one structural choice fights another:

- Freemium with team-dependent activation

- PLG with multi-level buying

- Per-seat pricing with single-player value

The team experiences these as "growth problems." They are really unresolved design tensions.

In ProductQuant's work across B2B SaaS companies, the 3 most common contradiction patterns are:

Self-serve pricing with sales-led activation. The pricing page invites users to start free, but the product requires multi-stakeholder setup that no single user can complete alone. Free signups look healthy. Paid conversion is flat.

PLG motion with committee buying. The product is designed for bottom-up adoption, but the actual buyer is a VP who requires security reviews, ROI justification, and procurement involvement. The product gets used by teams who did not choose it. The buyer who chose it cannot see the value without a dashboard they do not have.

Per-seat pricing with single-player value. The product creates value for one user but charges per seat. When a power user invites the team, each invite adds cost without adding much value, because the value was never shared. Expansion stalls because the buyer has no incentive to grow usage.

Teams that have not classified their product DNA treat these contradictions as isolated problems: "we need a better free-to-paid funnel," "we need to improve our PLG metrics," "we need to optimize pricing."

Each fix makes the underlying tension worse. The contradiction is not in the execution. It is in the structural design.
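The three patterns above are combinations of classification values, so they can be written as rules over a profile. The following is a minimal sketch that assumes a simplified profile with plain-string fields. The field names, including `pricing_entry` and `value_topology`, are hypothetical. A real contradiction map would also rank tensions by impact, which this sketch does not attempt.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Profile:
    # Hypothetical, simplified profile; plain strings keep the sketch short.
    pricing_entry: str   # "freemium", "self-serve", or "sales-quoted"
    activation: str      # "fast-value", "phased", or "team-dependent"
    motion: str          # "PLG", "sales-led", or "hybrid"
    buying: str          # "individual" or "committee"
    expansion: str       # "seat-based", "usage-based", "module", "tier", "cross-sell"
    value_topology: str  # "single-player", "multiplayer", "network", "multi-stakeholder"


# Each rule pairs a contradiction name with a predicate that is True when it applies.
Rule = tuple[str, Callable[[Profile], bool]]

RULES: list[Rule] = [
    # Free or self-serve entry, but value needs setup no single user can finish.
    ("Self-serve pricing with sales-led activation",
     lambda p: (p.pricing_entry in {"freemium", "self-serve"}
                and p.activation == "team-dependent")),
    ("PLG motion with committee buying",
     lambda p: p.motion == "PLG" and p.buying == "committee"),
    ("Per-seat pricing with single-player value",
     lambda p: p.expansion == "seat-based" and p.value_topology == "single-player"),
]


def contradictions(profile: Profile) -> list[str]:
    """Return the name of every rule the profile triggers, in rule order."""
    return [name for name, applies in RULES if applies(profile)]


# Hypothetical profile: PLG with freemium entry, team-dependent activation, committee buying.
profile = Profile(
    pricing_entry="freemium",
    activation="team-dependent",
    motion="PLG",
    buying="committee",
    expansion="seat-based",
    value_topology="multi-stakeholder",
)
for tension in contradictions(profile):
    print("contradiction:", tension)
```

Because each rule is a named predicate, adding a pattern the team discovers later is one more entry in `RULES`. The rest of the sketch stays unchanged.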

The most common contradiction we find is between the expansion model the product actually supports and the one the board expects. A seat-based product gets told to hit 130% net dollar retention. Seat-based expansion has a natural ceiling around 105-115% NDR because it is constrained by headcount growth, not usage growth.

The gap between the board target and the structural ceiling is not an execution problem. It is a classification problem.
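The ceiling argument is arithmetic. Net dollar retention compares a cohort's recurring revenue at the end of a period with what it was at the start: (starting ARR + expansion - contraction - churn) / starting ARR. If expansion comes only from seats, and seats track customer headcount, then expansion is capped by how fast customers hire. The sketch below works through that logic with a constant per-seat price and hypothetical numbers: 12% headcount growth and 5% combined churn and contraction. These figures are illustrative and are not drawn from ProductQuant's data.

```python
def net_dollar_retention(start_arr: float, expansion: float,
                         contraction: float, churn: float) -> float:
    """NDR for one customer cohort over a period, as a ratio (1.07 means 107%)."""
    if start_arr <= 0:
        raise ValueError("start_arr must be positive")
    return (start_arr + expansion - contraction - churn) / start_arr


def seat_based_ndr(seat_growth: float, lost_rate: float) -> float:
    """NDR when expansion comes only from seats added as customer headcount grows.

    seat_growth: net new seats in retained accounts, as a fraction of starting seats.
    lost_rate: churn plus contraction, as a fraction of starting ARR
    (lumped into churn here for simplicity). Assumes a constant price per seat.
    """
    start = 1.0  # normalise starting ARR to 1
    return net_dollar_retention(start, start * seat_growth, 0.0, start * lost_rate)


def required_seat_growth(target_ndr: float, lost_rate: float) -> float:
    """Seat growth needed to reach target_ndr under the same assumptions."""
    return target_ndr - 1.0 + lost_rate


# Hypothetical cohort: seats grow 12% a year; churn plus contraction removes 5%.
print(f"{seat_based_ndr(0.12, 0.05):.0%}")        # 107%
# Seat growth needed to hit a 130% board target with the same 5% loss.
print(f"{required_seat_growth(1.30, 0.05):.0%}")  # 35%
```

Under these assumptions, a 130% target requires customers to add about 35% more seats every year. Headcount growth at most customers does not come close to that rate.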

Want the lightweight version first?

Start with the self-audit if you want a fast read before doing the deeper analysis. The full Product DNA analysis goes much further into contradictions, outputs, and priority decisions.

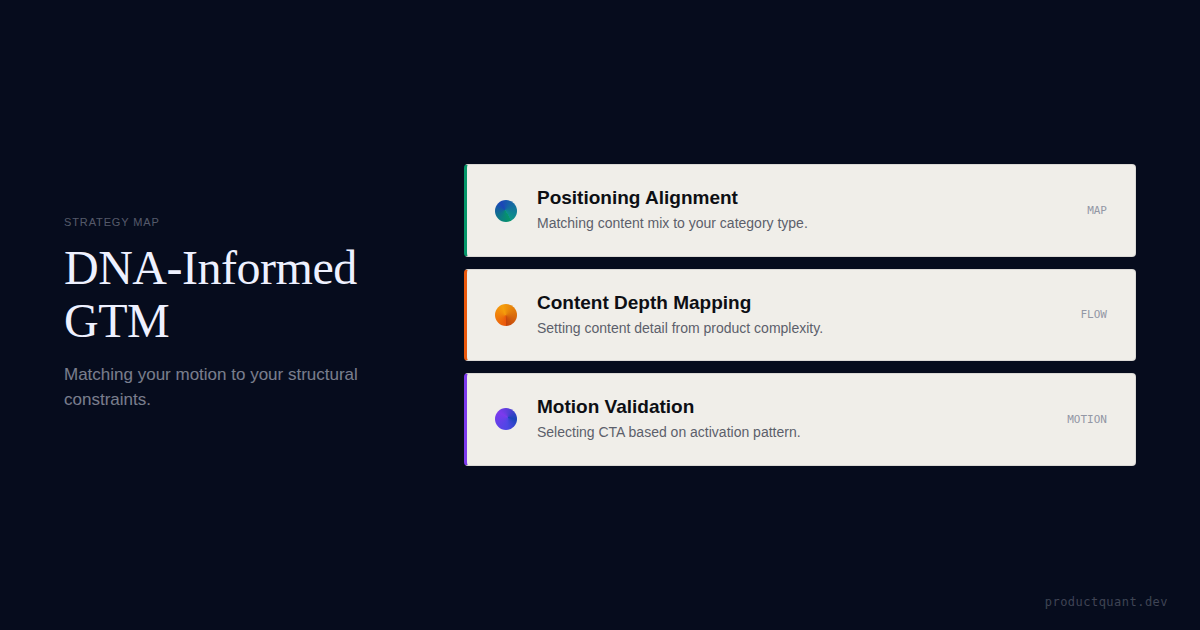

4. The strategy implications layer

The purpose is not classification for its own sake. It is to change real decisions.

Once the structural profile is clear, the downstream choices get easier: Which motion fits? What pricing models are plausible? What kind of content does the market need? What should sales-assist own? Which benchmark examples are structurally misleading?

This is where the analysis connects to the work:

- A differentiator with phased activation and committee buying needs case studies, ROI frameworks, security documentation, and a sales-assist motion

- A category creator with fast-value activation and single-player topology needs viral loops, self-serve onboarding, and content that educates a market that does not yet know the category exists

Same product category. Completely different playbook.

The strategy implications layer also surfaces which benchmark comparisons are dangerous. A team with team-dependent activation should not be comparing its free-to-paid conversion to a product with single-player value. The comparison is not just unfair — it is structurally invalid.

The right comparison set is products with similar activation paths, not products with similar category labels.
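A benchmark filter that keys on activation path rather than category label might look like the following. This is a minimal sketch with hypothetical company names. It matches on activation pattern alone, as the sentence above suggests. A fuller comparison set would likely also weigh buying structure and the other dimensions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Company:
    name: str
    category: str    # market category label, e.g. "analytics"
    activation: str  # "fast-value", "phased", or "team-dependent"


def comparison_set(target: Company, candidates: list[Company]) -> tuple[list[str], list[str]]:
    """Split candidates into structurally valid and misleading benchmarks.

    A candidate is valid when it shares the target's activation path;
    the category label is deliberately ignored.
    """
    valid: list[str] = []
    misleading: list[str] = []
    for company in candidates:
        if company.activation == target.activation:
            valid.append(company.name)
        else:
            misleading.append(company.name)
    return valid, misleading


# Hypothetical companies, for illustration only.
us = Company("Our product", "analytics", "team-dependent")
candidates = [
    Company("Peer A", "analytics", "fast-value"),  # same label, different path
    Company("Peer B", "collaboration", "team-dependent"),
    Company("Peer C", "analytics", "team-dependent"),
]
valid, misleading = comparison_set(us, candidates)
print("compare against:", valid)               # ['Peer B', 'Peer C']
print("structurally misleading:", misleading)  # ['Peer A']
```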

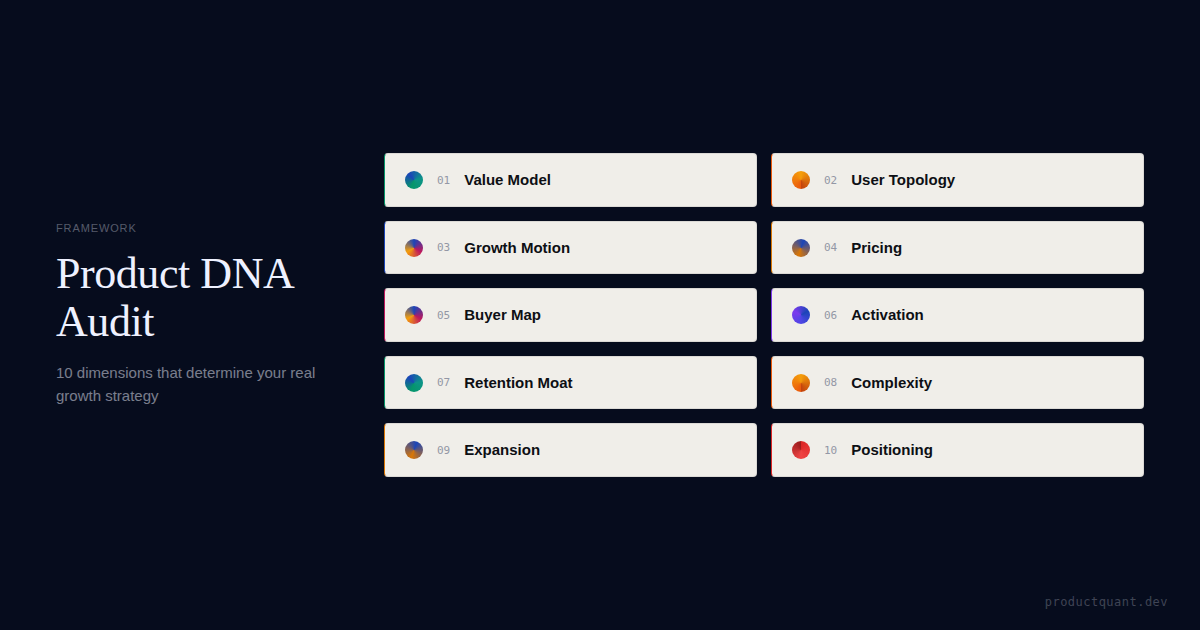

5. The output package

A good analysis should end with more than observations. It should produce:

- A 6-dimension classification profile — one row per dimension

- A contradiction map — 3-7 active tensions ranked by impact

- A strategy implication summary — what fits, what does not, and why

- A benchmark comparison set — which companies are structurally similar and which are misleading

- A priority action list — 2-4 changes ranked by impact and effort
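The list above ranks changes by impact and effort but does not say how the two are combined. One simple option is an impact-to-effort ratio. The sketch below assumes that ratio, along with 1-5 scales and made-up example scores. It illustrates the idea and is not the method used in the analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high)
    effort: int  # assumed scale: 1 (low) to 5 (high)


def priority_list(actions: list[Action], limit: int = 4) -> list[Action]:
    """Rank actions by impact-to-effort ratio, breaking ties by higher impact."""
    for action in actions:
        if not (1 <= action.impact <= 5 and 1 <= action.effort <= 5):
            raise ValueError(f"scores out of range for {action.name!r}")
    ranked = sorted(actions, key=lambda a: (a.impact / a.effort, a.impact), reverse=True)
    return ranked[:limit]


# Hypothetical candidate changes with made-up scores.
candidates = [
    Action("ROI dashboard for the economic buyer", impact=4, effort=2),
    Action("Usage-based account scoring for sales-assist", impact=5, effort=3),
    Action("Committee-focused content", impact=3, effort=2),
    Action("Security documentation pack", impact=2, effort=1),
    Action("Polish the solo onboarding flow", impact=1, effort=4),
]
for rank, action in enumerate(priority_list(candidates), start=1):
    print(rank, action.name)
```

A ratio favors cheap wins. If the team would rather surface the biggest structural change first, sorting by impact and using effort only as a tiebreaker is an equally defensible choice.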

The analysis usually takes 4-6 weeks to complete, depending on product complexity and data availability.

Without those outputs, the analysis stays interesting. And underused.

What the Output Usually Changes

The clearest sign that a Product DNA analysis is working is that it changes the quality of downstream decisions almost immediately.

It changes what the team stops debating

Many teams discover that they have been running debates that should never have stayed open this long:

- Should we go more PLG?

- Should we add a free tier?

- Should we price per seat?

- Should we reposition around a category we do not actually inhabit?

Once the DNA is clearer, some of those questions stop being strategic options and start being obvious misfits.

It changes what "good execution" even means

If a product has committee buying, guided activation, and a buyer-user split, then "good execution" does not mean copying a frictionless self-serve motion from a single-player product.

It means building a system that respects how this product actually gets bought and proves value.

It changes cross-functional alignment

The analysis often resolves disagreements that were being fought through proxies:

- Product says the trial is too short

- Sales says the buyer needs more proof

- Pricing says the current model cannot expand

- Marketing says the content is too generic

Those can all be fragments of the same DNA mismatch. Not separate opinions.

The value comes from shrinking the decision set. Once the product is classified honestly, many seductive but misaligned strategies become easier to rule out.

Why borrowed playbooks fail

One of the most consistent patterns in ProductQuant's work is the borrowed playbook trap. A company sees another SaaS business doing something that works — freemium, PLG, usage-based pricing — and copies the motion without checking whether their own product has the DNA to carry it.

This is not a critique of imitation. It is a structural argument.

A freemium model requires single-player value, fast activation, and a clear upgrade trigger. If the product needs team setup before value exists, freemium becomes a cost center — not a growth engine.

A usage-based pricing model requires measurable, frequent, and variable usage events. If the product is used a few times per week with stable patterns, usage-based pricing will feel unpredictable to buyers. And unpredictable to revenue forecasting.

The cost of this mismatch is not just wasted engineering time. It is strategic drift — the company moves further from what its product actually does well while explaining away the gap as "we just need to execute better."

Execution is not the problem. Classification is.

What changes after: a pattern from the work

In one engagement, a B2B SaaS company at $8M ARR was running a PLG motion with team-dependent activation and committee buying:

- The growth team was optimizing free-to-paid conversion. Conversion stayed flat.

- The sales team was frustrated because the best users were not the buyers. No alignment on who to target.

- Marketing was frustrated because the content that educated the market was too deep for top-of-funnel metrics. Content was right — audience was wrong.

After the DNA analysis classified the product honestly — differentiator positioning, phased activation, committee buying, hybrid motion, module expansion — the strategy shifted:

- Built a sales-assist motion that used product usage data to identify qualified accounts, instead of optimizing self-serve conversion

- Created ROI dashboards for the economic buyer

- Redesigned the content strategy around committee education rather than individual user activation

Within 2 quarters, the sales cycle shortened. Not because the product changed. Because the motion finally matched the product's actual buying structure instead of fighting it.

| Analysis layer | Question it answers | What changes after |
|---|---|---|
| Structural classification | What kind of product are we actually operating? | The strategy set gets narrower and cleaner |
| Activation / value path | How does value become real? | Trial, onboarding, and sales-assist design improve |
| Contradiction mapping | Where are two parts of the system fighting? | The team stops treating structural drag as local execution failure |
| Strategy implications | Which motions and models actually fit? | Roadmap, pricing, GTM, and content become more coherent |

The analysis gets sharper when the contradictions are visible

If the company already feels pulled between pricing, PLG, buyer complexity, and GTM expectations, the contradiction map is usually the fastest place to look next.

What to Do Instead

If your team is still choosing strategy before it has classified the product honestly, reset the order of operations.

- Start with the current product, not the aspirational one — Classify how the product actually gets bought, activated, and expanded today.

- Map the contradictions before fixing the funnel — If several functions are all "kind of right," the problem may be structural rather than local.

- Use the output to eliminate misfit strategies — The analysis is most valuable when it removes options, not when it produces another broad list of possibilities.

- Turn the analysis into next-quarter decisions — Pricing, activation, GTM, and content should all reflect the classified product rather than generic SaaS advice.

The practical benefit is speed. Once the product is classified clearly, the company spends less time negotiating against reality and more time building around it.

FAQ

How is Product DNA analysis different from a Product DNA audit?

The audit is the lighter entry point. It helps a team classify itself quickly. The full analysis goes deeper into contradictions, implications, and the specific strategic choices the product can and cannot support.

Is Product DNA analysis just positioning work?

No. Positioning is one output area. The analysis also covers value delivery, topology, activation pattern, buyer-user structure, moat type, expansion model, and the strategic tensions between them.

When should a company do this analysis?

Usually when strategy debates keep recurring, when the company is moving upmarket, when pricing or PLG decisions feel unstable, or when multiple functions are solving the same growth problem from conflicting assumptions.

What is the clearest sign the analysis is needed?

If the team keeps borrowing playbooks from companies with very different products and then explaining away the mismatch as execution, that is usually the signal. The classification layer is missing.

Sources

- Bessemer Venture Partners: Cloud 100 Benchmarks Report — growth dynamics at $5M–$20M ARR

- ChartMogul: The Odds of Making It — ARR milestone progression data across 1,000+ SaaS companies

- Rework: SaaS Growth Stages — growth stage definitions and transition challenges

- ProductQuant: Product DNA Framework

- ProductQuant: Run a Product DNA Audit in 10 Minutes

Classify the product before borrowing the next playbook.

If pricing, PLG, GTM, and activation debates keep cycling without resolution, the missing layer is usually the structural read underneath them.