TL;DR

- Gartner predicts that through 2026, organizations will abandon 60% of AI projects that lack AI-ready data foundations. The cause is not a flawed algorithm or model architecture. The data was never there to support the project.

- Being AI-ready means having data aligned to specific use cases, governed at the asset level, with automated pipelines, live metadata, and continuous quality assurance. Not just a warehouse and a dashboard.

- Approximately 70% of AI projects fail to move from pilot to production, with data readiness failures as the root cause in nearly every case. The pattern is consistent across industries and company sizes.

- Organizations with AI-ready data reach production in 10 to 14 weeks. Teams starting from an unprepared foundation take 6 to 18 months. The difference is knowing what you have before you start building.

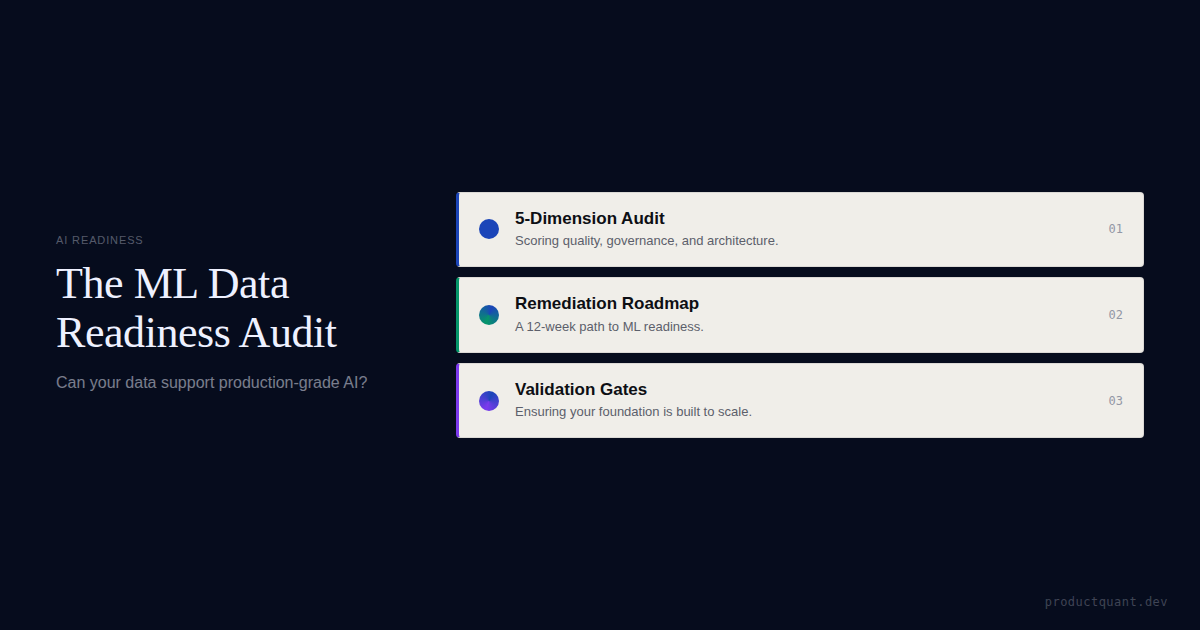

- The audit scores 5 dimensions from 1 to 5: Data Quality, Data Governance, Data Architecture, Infrastructure, and Data Culture. Level 3 is the minimum for production.

- Scoring below Level 3 means you remediate first. Teams that discover data gaps mid-build scrap 46% of their proof-of-concept projects entirely.

The 60% Abandonment Rate

6 out of 10. Those are the odds against your AI project if your data is not ready.

You have 10 AI features on your product roadmap. Before you commit engineering resources to any of them, you need to understand why 6 of those 10 will likely be abandoned.

Through 2026, organizations will abandon 60% of AI projects that lack AI-ready data. Not because the model architecture is wrong. Not because your engineers lack talent. Because the data foundation was never there to begin with. Per Gartner, this is the single largest predictor of AI project failure across all industries.

60% of AI projects lacking AI-ready data will be abandoned through 2026. The median time to failure: 13.7 months of wasted engineering effort.

The median time to abandonment is 13.7 months, according to Pertamina Partners. That is over a year of engineering hours invested in building a feature that your data cannot support. The 46% proof-of-concept scrap rate that Agility at Scale documents has one consistent cause: teams discover data gaps mid-build.

Your product team starts building a churn prediction model in month one. By month four, the data scientist realizes that 30% of user accounts have null values in the "last login date" field. The model trains on incomplete signals. The predictions are unreliable. The feature gets shelved. Four months of work, gone. This pattern repeats across recommendation engines, propensity scores, and automated workflows.

"The readiness audit is not a gate. It is a map. It tells you where your data is strong enough to support AI and where it needs work first."

— Jake McMahon, ProductQuant

The teams that ship AI features in 10 to 14 weeks do not have better models. They have better data. And they know exactly what they have before they start building. Read our analysis of why most AI features fail before the model layer to understand the full failure pattern.

What "AI-Ready" Actually Means

Most teams believe they are AI-ready because they have a data warehouse, an analytics tool, and a business intelligence dashboard. You might be running Snowflake or BigQuery, tracking events in PostHog or Mixpanel, and building reports in Looker or Tableau. That feels like a complete data stack. It is not AI-ready.

That is data-having. There is a meaningful difference between storing data and having data that can reliably support machine learning workloads. Gartner defines AI-ready data with 5 specific criteria that go well beyond having infrastructure in place.

First, data must be aligned to specific use cases. You need to know exactly which datasets a specific model requires, not whether you have data in general. Second, data must be actively governed at the asset level. A named person owns the quality of each dataset, rather than the data team holding it as a vague collective responsibility.

Third, automated pipelines must include quality gates. Data quality checks run on every ingestion cycle, not during quarterly audits that nobody reads.

Fourth, live metadata management keeps your data catalog current. Static catalogs go stale in 60 to 90 days, according to SR Analytics. Fifth, continuous quality assurance tracks completeness, accuracy, consistency, and timeliness in real time, not as retrospective findings.

If your data catalog is older than 90 days, you are not making decisions based on what data you actually have.
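The 90-day staleness rule can be checked mechanically. The sketch below is illustrative only: the catalog contents, the `stale_entries` helper, and the choice of 90 days as the cutoff (the upper end of the 60-to-90-day range quoted above) are assumptions, not part of any particular catalog tool.

```python
from datetime import date, timedelta

# Assumed cutoff: the upper end of the 60-to-90-day range quoted above.
STALE_AFTER_DAYS = 90


def stale_entries(catalog, today, max_age_days=STALE_AFTER_DAYS):
    """Return names of catalog entries not reviewed within max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(
        name for name, last_reviewed in catalog.items() if last_reviewed < cutoff
    )


# Hypothetical catalog: dataset name -> date its metadata was last reviewed.
catalog = {
    "user_events": date(2025, 1, 10),
    "billing_accounts": date(2025, 5, 20),
    "support_tickets": date(2024, 11, 2),
}

stale = stale_entries(catalog, today=date(2025, 6, 1))
print(stale)
```

In a real setup the review dates would come from the catalog's own metadata rather than a hand-written dictionary.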

Understanding what readiness looks like is the first step. Running the audit against your actual infrastructure is the second. If you want to start with a broader data assessment first, our AI data readiness audit covers the foundational layer that this ML audit builds on top of.

The ML Readiness Audit: 5 Dimensions

The audit scores 5 dimensions of your data and infrastructure stack. Each dimension gets a score from 1 to 5. Level 3 is the minimum threshold for reliably transitioning AI features from pilot to production. Below Level 3, you are building on unstable ground and the timeline will extend accordingly.

Dimension 1: Data Quality

The most fundamental question is not how much data you have. It is whether that data is correct. A model trained on inaccurate data will produce inaccurate predictions, and no amount of engineering sophistication can compensate for garbage input.

Completeness measures what percentage of required fields are populated. If your churn model needs "last login date" and 30% of users have null values, your model will be biased toward the users who do log in regularly. Accuracy checks whether the data matches reality. If your event tracking fires twice for the same user action, your model trains on inflated signals that distort every downstream prediction.

Consistency matters when the same event carries different names across web, mobile, and API layers. Inconsistent event naming is the number one data quality issue in product analytics. Timeliness measures the gap between when an event happens and when it is queryable by a model. For real-time inference, that gap needs to be measured in seconds, not hours.
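Three of these checks are simple enough to sketch in a few lines. The example below is a minimal illustration, assuming records arrive as plain dictionaries. The field names, event names, and helper functions are hypothetical, and a production version would run against your warehouse rather than in-memory lists.

```python
def completeness(records, field):
    """Share of records where `field` is present and not None."""
    if not records:
        return 0.0
    populated = sum(1 for r in records if r.get(field) is not None)
    return populated / len(records)


def duplicate_rate(events, key_fields):
    """Share of events that repeat an earlier event on the given key fields."""
    if not events:
        return 0.0
    seen = set()
    duplicates = 0
    for e in events:
        key = tuple(e.get(f) for f in key_fields)
        if key in seen:
            duplicates += 1
        seen.add(key)
    return duplicates / len(events)


def inconsistent_names(events, canonical):
    """Event names that do not appear in the canonical tracking plan."""
    return sorted({e["name"] for e in events} - set(canonical))


# Hypothetical sample data.
users = [
    {"id": 1, "last_login_date": "2025-05-01"},
    {"id": 2, "last_login_date": None},
    {"id": 3, "last_login_date": "2025-05-03"},
    {"id": 4},  # field missing entirely
]
events = [
    {"user_id": 1, "name": "signup_completed", "ts": 100},
    {"user_id": 1, "name": "signup_completed", "ts": 100},  # fired twice
    {"user_id": 2, "name": "SignupCompleted", "ts": 130},   # different naming
    {"user_id": 3, "name": "signup_completed", "ts": 150},
]

login_completeness = completeness(users, "last_login_date")
dup_rate = duplicate_rate(events, ["user_id", "name", "ts"])
off_plan = inconsistent_names(events, canonical=["signup_completed"])
print(login_completeness, dup_rate, off_plan)
```

Each function returns a number or list you can track per dataset, which is the shape of metric the Level 3 rubric below asks for.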

The scoring rubric for Data Quality runs from ad hoc to self-improving. At Level 1, no quality metrics are tracked and data issues are discovered by users filing support tickets. At Level 2, some metrics exist but quality issues are addressed only after they cause visible problems. At Level 3, quality is tracked per dataset with automated alerts for critical drops, and this is the minimum for AI production. At Level 4, quality thresholds are defined per use case and data quality is part of the development workflow. At Level 5, automated quality repair runs continuously and pipelines self-heal based on anomaly detection.

The insight: Data quality is not about volume — it is about completeness, accuracy, consistency, and timeliness measured per dataset, not as a team-wide abstraction.

Dimension 2: Data Governance

The governance question is not who manages the database. It is who is formally accountable when the data is wrong. Without named accountability, data quality issues fall through the gaps between teams and nobody takes ownership of the fix.

Named data owners are the first requirement. If the answer to "who owns this dataset" is "the data team," you do not have governance. You have diffusion of responsibility. Documented policies establish rules for data creation, modification, access, and retirement. Active data stewards manage domain quality proactively rather than reacting to bugs after they surface.

Formal escalation paths resolve disputes when data quality conflicts arise between teams. AI-specific governance adds bias monitoring, output auditability, and model drift tracking to the traditional governance stack. These are not part of standard data governance frameworks and they need deliberate implementation.

The scoring for Data Governance follows the same 1-to-5 scale. Level 1 has no formal ownership and the assumption that everyone owns the data means nobody does. Level 2 has some owners named but policies exist without enforcement. Level 3 establishes formal ownership with active stewards and documented escalation paths, which is the minimum for AI production. Level 4 embeds governance in the development workflow with AI-specific policies. Level 5 automates governance and catches policy violations before deployment.

The insight: Governance is not about managing databases — it is about naming one person who is accountable when the data is wrong.
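One way to test the "who owns this dataset" question is to scan your ownership records for collective answers. This is a rough sketch: the `COLLECTIVE_OWNERS` labels, the dataset names, and the owner name are all hypothetical placeholders.

```python
# Assumed labels that signal collective rather than named ownership.
COLLECTIVE_OWNERS = {"", "data team", "the data team", "engineering", "everyone"}


def ownership_gaps(datasets):
    """Return dataset names that lack a single named, accountable owner."""
    gaps = []
    for name, owner in datasets.items():
        normalized = (owner or "").strip().lower()
        if normalized in COLLECTIVE_OWNERS:
            gaps.append(name)
    return sorted(gaps)


# Hypothetical ownership register: dataset name -> recorded owner.
datasets = {
    "user_events": "Priya Nair",
    "billing_accounts": "the data team",
    "support_tickets": None,
}

gaps = ownership_gaps(datasets)
print(gaps)
```

Any dataset in the returned list is a candidate for the named-owner conversation before it feeds a model.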

Dimension 3: Data Architecture

This is where many teams discover that their data warehouse was built for reporting, not inference. Reporting queries are batch-oriented and tolerate latency. Inference workloads can require real-time access and very different architectural patterns.

Storage scale determines whether your infrastructure handles the volume and velocity of AI workloads. Pipeline latency measures how long an event takes to go from "user did it" to "queryable by a model." For batch inference, hours are acceptable. For real-time features, it needs to be seconds. Your data catalog must be maintained and searchable. A data scientist should find the features they need in under 30 minutes.

Batch and real-time support asks whether your architecture handles both modes. Most AI features start with batch processing and need real-time capability within 6 months of launch. Documentation matters too. If your data architecture lives in one engineer's head, you have a single point of failure that compounds every time someone leaves.

Data Architecture scoring: Level 1 has no data catalog, undocumented architecture, and batch-only pipelines. Level 2 has a basic catalog and some documentation with batch pipelines that need manual fixes. Level 3 has a maintained catalog, documented architecture, and batch plus streaming support, meeting the minimum for AI production. Level 4 adds self-service data discovery and automated pipeline monitoring. Level 5 achieves FAIR data standards with automated schema evolution detection.

The insight: Your data warehouse was likely built for reporting, not inference — batch queries tolerate latency that real-time AI features cannot.
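Pipeline latency is measurable if you record both when an event occurred and when it became queryable. The sketch below assumes timestamps in seconds and uses a nearest-rank 95th percentile. The 5-second and 6-hour budgets are illustrative assumptions standing in for "seconds" and "hours", not thresholds from the source.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def pipeline_latencies(events):
    """Seconds between when each event occurred and when it became queryable."""
    return [e["queryable_at"] - e["occurred_at"] for e in events]


def supported_modes(p95_seconds, realtime_max=5, batch_max=6 * 3600):
    """Classify a pipeline against assumed latency budgets (in seconds)."""
    modes = []
    if p95_seconds <= realtime_max:
        modes.append("real-time")
    if p95_seconds <= batch_max:
        modes.append("batch")
    return modes


# Hypothetical events with timestamps in seconds.
events = [
    {"occurred_at": 0, "queryable_at": 40},
    {"occurred_at": 10, "queryable_at": 70},
    {"occurred_at": 20, "queryable_at": 920},
    {"occurred_at": 30, "queryable_at": 75},
]

p95 = percentile(pipeline_latencies(events), 95)
modes = supported_modes(p95)
print(p95, modes)
```

Using a high percentile rather than the average keeps one slow path from hiding behind many fast ones.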

Dimension 4: Infrastructure

Having a model running in a notebook is different from running a model in production at scale. This dimension assesses whether your deployment layer can support AI features once they are built and tested.

Compute environment readiness covers your cloud infrastructure on AWS, GCP, or Azure. You need to be able to provision GPU and CPU resources for both model training and inference workloads. MLOps capabilities include CI/CD for models, versioning, monitoring, and rollback. You should be able to deploy a new model version without downtime and roll back if predictions degrade.

A sandbox environment gives your team an isolated space for experimentation that cannot break production systems. Security alignment ensures your infrastructure meets compliance requirements for AI data processing, including GDPR and HIPAA where applicable. API integration determines whether models serve predictions through interfaces that your product can actually consume.

Infrastructure scoring: Level 1 has no MLOps, models run in notebooks, and no sandbox exists. Level 2 has some CI/CD, manual deployment processes, and a basic sandbox. Level 3 has MLOps in place with automated deployment and an isolated sandbox, meeting the minimum for AI production. Level 4 adds automated model monitoring and A/B testing for models in production. Level 5 features automated retraining pipelines where model performance triggers retraining without manual intervention.

The insight: A model in a notebook is not production — MLOps, sandbox isolation, and rollback capability separate experiments from shipped features.
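The rollback rule itself can be very small. The following is a minimal sketch, assuming each model version reports a single quality metric where higher is better. The version names, the metric values, and the 0.02 tolerance are hypothetical.

```python
def choose_serving_version(baseline, candidate, max_drop=0.02):
    """Serve the candidate unless its metric falls more than max_drop below baseline."""
    if candidate["metric"] < baseline["metric"] - max_drop:
        return baseline["version"]  # roll back to the known-good version
    return candidate["version"]


# Hypothetical model versions with an evaluation metric (higher is better).
baseline = {"version": "churn-v1", "metric": 0.81}
degraded = {"version": "churn-v2", "metric": 0.74}
healthy = {"version": "churn-v3", "metric": 0.80}

after_degraded = choose_serving_version(baseline, degraded)
after_healthy = choose_serving_version(baseline, healthy)
print(after_degraded, after_healthy)
```

The point of the tolerance is to avoid rolling back on noise. What counts as a meaningful drop depends on your metric and traffic.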

Dimension 5: Data Culture

This is the softest dimension and the hardest to fix. If your team does not make data-driven decisions today, an AI feature will not change that dynamic. Culture shifts happen slowly and they require consistent reinforcement from leadership.

Data literacy measures whether non-technical team members can read and interpret data outputs. If they cannot, they will not trust the AI output regardless of its accuracy. Executive sponsorship requires visible budget and leadership commitment for data initiatives. AI cannot be a pet project that gets defunded when the next shiny object appears.

Data-driven decision norms separate teams that back decisions with data from teams that back decisions with the highest-paid person's opinion. Culture determines whether AI output gets trusted or ignored, regardless of its accuracy. Cross-functional collaboration ensures product, engineering, and data science teams work from the same data rather than maintaining separate versions of the truth.

Data Culture scoring: Level 1 has siloed data, intuition-driven decisions, and no executive sponsorship. Level 2 has some data literacy training and decisions occasionally backed by data. Level 3 has documented executive sponsorship and data-driven norms emerging, which is the minimum for AI production. Level 4 makes data literacy a hiring and development requirement with standard cross-functional collaboration. Level 5 builds a Center of Excellence that drives continuous optimization and makes data culture a competitive advantage.

The insight: Culture is the hardest dimension to fix and the easiest to ignore — if decisions today run on opinion, AI output will too.

Each dimension feeds into the others. Poor Data Quality makes governance meaningless because there is nothing reliable to govern. Weak Architecture undermines Infrastructure because the pipes cannot carry what the models need. And a shallow Data Culture means all four technical dimensions can be strong and the features still get ignored by the organization.

What Your Score Means

Once you have scored all 5 dimensions, the interpretation falls into 3 clear tiers. Each tier maps to a different timeline, risk profile, and action plan. There is no ambiguity about what to do next once you know where you stand.

All Dimensions at Level 3 or Above

Your AI features can move from pilot to production reliably. You should expect timelines in the 10 to 14 week range per SR Analytics. Start with your highest-impact, lowest-risk feature and build from there. Your data foundation will support the workload and your team has the governance to maintain it.

The insight: Level 3 across all dimensions means you ship — start with the highest-impact, lowest-risk feature and build momentum from there.

Any Dimension at Level 1 or 2

You have a remediation gap that needs attention before committing to a build timeline. This does not mean abandoning AI. It means addressing the gap first and adjusting your timeline accordingly. Each dimension below Level 3 adds 2 to 6 months to your timeline. Teams starting from an unprepared foundation take 6 to 18 months to reach production according to SR Analytics.

The priority order for remediation matters. Data Quality comes first because nothing else functions if the underlying data is wrong. Data Governance follows second because without ownership, quality degrades regardless of your initial improvements. Data Architecture is third because you need the pipes before you can run the models through them. Infrastructure is fourth because deployment capabilities can be built in parallel with model development. Data Culture is last because culture changes slowly and will improve as you deliver visible value from the other four dimensions.

The insight: Every dimension below Level 3 adds 2 to 6 months — remediate in priority order: Quality, Governance, Architecture, Infrastructure, Culture.
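The tiering and the priority order can be expressed as a short function. This is a sketch of the interpretation described in this section, assuming scores are held in a dictionary keyed by dimension name. The function name, tier labels, and example scores are illustrative.

```python
# Remediation priority order as described above.
REMEDIATION_ORDER = [
    "Data Quality",
    "Data Governance",
    "Data Architecture",
    "Infrastructure",
    "Data Culture",
]
PRODUCTION_MINIMUM = 3


def interpret(scores):
    """Map five 1-to-5 dimension scores to a tier and an ordered gap list."""
    gaps = [d for d in REMEDIATION_ORDER if scores[d] < PRODUCTION_MINIMUM]
    if not gaps:
        tier = "ready"
    elif all(scores[d] == 1 for d in REMEDIATION_ORDER):
        tier = "build the foundation first"
    else:
        tier = "remediate first"
    return {"tier": tier, "remediate_in_order": gaps}


# Hypothetical audit result.
scores = {
    "Data Quality": 2,
    "Data Governance": 3,
    "Data Architecture": 4,
    "Infrastructure": 2,
    "Data Culture": 3,
}

result = interpret(scores)
print(result)
```

The sketch deliberately leaves out timeline estimates, since the months added per gap depend on how far below Level 3 each dimension sits.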

All Dimensions at Level 1

You are not ready for AI yet and this is not a failure. It is a diagnosis. You need to build the foundation first: event tracking, data governance, and a maintained data catalog. This takes 3 to 6 months and will make every future AI project faster and more accurate. The AI feature prioritization framework can help you decide which foundational capabilities to invest in first.

The audit surfaces gaps before the build starts, which is exactly when you want to find them. Discovering a Level 1 Data Quality score on day one saves you the 13.7 months of wasted effort that the median abandoned project burns through.

The insight: Scoring Level 1 everywhere is not a failure — it is a diagnosis that saves you 13.7 months of wasted build effort by surfacing the gap before you commit.

The Remediation Timeline

Most teams expect a readiness audit to take weeks of assessment work. Scoring all 5 dimensions actually takes days. The remediation, fixing what the audit reveals, is what takes weeks. Understanding this distinction keeps your expectations aligned with reality.

A realistic remediation plan for a team scoring at Level 2 across all dimensions follows a 12-week structure. Each phase builds on the previous one and the deliverables are concrete enough to verify completion objectively.

| Week | Focus | Deliverable |
|---|---|---|
| 1 to 2 | Data quality audit | Quality metrics dashboard with top 10 data issues prioritized by impact |
| 3 to 4 | Data ownership | Named owners per dataset with governance policies drafted and reviewed |
| 5 to 6 | Event tracking audit | Tracking plan documented and critical events instrumented across all surfaces |
| 7 to 8 | Data catalog | Catalog populated, searchable, and tagged with freshness indicators per dataset |
| 9 to 10 | Infrastructure assessment | MLOps requirements defined and sandbox environment provisioned |
| 11 to 12 | Remediation complete | Re-audit confirms all dimensions at Level 3 or above with evidence logged |

12 weeks to go from Level 2 to Level 3 across all dimensions. After that, your first AI feature goes from an 18-month gamble to a 10 to 14 week delivery. The difference between those two timelines is the readiness audit and the disciplined remediation work that follows it.

The key insight is that remediation is sequential. You cannot skip Data Quality and jump straight to Infrastructure. The pipes do not matter if what flows through them is unreliable. Full readiness assessments reduce project failure rates by 40% and accelerate time-to-value by 50%, according to Omisoft. That return on assessment effort is what makes the audit worth running before every AI commitment.

Generative AI vs. Predictive AI: Different Readiness Profiles

Most readiness checklists treat all AI features as if they have the same data requirements. They do not. Generative AI and predictive AI have meaningfully different infrastructure, data, and governance needs. A team might score Level 4 for one type and Level 2 for the other.

Predictive AI Readiness

Predictive AI covers churn models, propensity scores, and recommendation engines. These models need structured, labeled data with clear outcome variables. You need a minimum of 6 months of historical data to establish meaningful patterns. Feature engineering pipelines transform raw events into model inputs, and those pipelines need to be tested and reliable.

The Data Quality dimension matters most for predictive AI. If your behavioral event tracking is inconsistent, your model will fit to noise rather than signal. The Data Architecture dimension matters too because feature stores and batch processing pipelines are core to predictive workloads.

The insight: Predictive AI lives or dies on structured, labeled data — inconsistent event tracking means the model fits noise, not signal.

Generative AI Readiness

Generative AI covers summarization, question-answering, and content generation features. These models need an unstructured text corpus, not labeled training data. Retrieval-augmented generation infrastructure replaces traditional feature engineering. Prompt engineering and guardrails matter more than labeled training data.

Generative AI requires less labeled data but more corpus quality. Your documentation needs to be accurate, current, and well-organized. Your support ticket history needs consistent categorization. The Data Governance dimension matters more here because the model will surface content from your corpus and you need to control what it has access to.

The insight: Generative AI shifts the risk from data labeling to corpus quality — your documentation and support history must be accurate and organized.

Scoring for Each Type

Run the audit separately for generative and predictive use cases. A team can be Level 4 for generative AI and Level 2 for predictive AI, and that gap dictates your build sequence. Knowing which type you are assessing changes the remediation priority entirely.

This distinction also changes your build sequencing. You might ship a generative AI summarization feature in 10 to 14 weeks while simultaneously remediating your Data Quality score to enable a predictive churn model 6 months later. Both are valid paths. Running the audit tells you which path is open right now and which one needs groundwork first.

The insight: Run the audit separately for generative and predictive use cases — a team can be Level 4 for one and Level 2 for the other, and that gap dictates your build sequence.
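Keeping one scorecard per use case makes the build sequence easy to derive. The sketch below orders use cases by how many dimensions still sit below Level 3. The use-case names, the scores, and the tie-break by name are assumptions made for illustration.

```python
PRODUCTION_MINIMUM = 3


def build_sequence(audits):
    """Order use cases by how many dimensions are still below Level 3."""
    gap_counts = {
        use_case: sum(1 for s in scores.values() if s < PRODUCTION_MINIMUM)
        for use_case, scores in audits.items()
    }
    # Fewest gaps first; ties are broken alphabetically for a stable result.
    return sorted(gap_counts, key=lambda uc: (gap_counts[uc], uc))


# Hypothetical separate audits for one team.
audits = {
    "predictive: churn model": {
        "Data Quality": 2,
        "Data Governance": 3,
        "Data Architecture": 2,
        "Infrastructure": 3,
        "Data Culture": 3,
    },
    "generative: ticket summarization": {
        "Data Quality": 4,
        "Data Governance": 4,
        "Data Architecture": 3,
        "Infrastructure": 3,
        "Data Culture": 3,
    },
}

sequence = build_sequence(audits)
print(sequence)
```

Counting gaps is a crude proxy. In practice you would also weigh which dimension is missing, since a Data Quality gap blocks more than a Data Culture gap.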

FAQ

How long does the ML readiness audit take?

Scoring all 5 dimensions takes 2 to 3 days of focused assessment. You walk through each dimension, gather evidence, and assign scores. The remediation, fixing what the audit reveals, takes 4 to 12 weeks depending on your starting level across all dimensions.

The insight: The scoring takes days. The remediation takes weeks. Do not confuse assessment time with fix time.

Do you need a data scientist to run this audit?

No. The audit assesses infrastructure, governance, and culture — not model architecture or algorithm selection. A product leader or analytics engineer can run it with input from your data, engineering, and product teams. You need people who know where the data lives and how decisions get made, not people who know how to tune hyperparameters.

The insight: The audit evaluates your infrastructure and governance, not your model architecture — a product leader who knows where the data lives can run it.

What happens if you score below Level 3?

You remediate the gaps before committing to a build timeline. Level 3 is the minimum for reliable AI production, but scoring below it does not make AI impossible. It means your timeline is longer and your risk is higher if you build anyway. Full readiness assessments reduce project failure rates by 40% and accelerate time-to-value by 50% according to Omisoft, which is the return on running the audit even when the scores are uncomfortable.

The insight: Below Level 3 does not mean "no AI" — it means longer timelines, higher risk, and a 40% failure-rate reduction if you assess anyway.

Should you audit before or after choosing which AI feature to build?

Before, always. The audit tells you which features are buildable with your current data foundation. If your behavioral event tracking scores at Level 1, a churn prediction model is not viable yet. But a summarization feature built on your clean documentation corpus might be perfectly buildable today. The audit sorts buildable from not-yet-buildable so you can sequence your roadmap accordingly.

The insight: Audit before you choose — the score tells you which features are buildable today and which need groundwork first.

How often should you re-run the audit?

Every 4 to 6 weeks during active remediation, then quarterly once you reach Level 3 or above across all dimensions. Static data catalogs go stale in 60 to 90 days, so re-assessment keeps you honest about what your foundation actually looks like today.

Running the audit on a schedule turns it from a one-time checkpoint into an ongoing measurement system. You will spot regressions early and catch data drift before it impacts the models you have already shipped.

The insight: Re-run on a schedule — static data catalogs go stale in 60 to 90 days, and quarterly reassessment catches regression before it hits your models.

Sources

- Gartner: Lack of AI-Ready Data Puts AI Projects at Risk — 2026 prediction on AI project abandonment rates

- Agility at Scale: Data Readiness Assessment for AI — Research on the 70% pilot-to-production failure rate

- Pertamina Partners: AI Project Failure Statistics 2026 — Median time to abandonment of 13.7 months

- SR Analytics: Why 95% of AI Projects Fail — Timeline data on AI-ready vs. unprepared teams

- Omisoft: AI Readiness Assessment Guide — Failure rate reduction and time-to-value acceleration data

All statistics and claims in this article are sourced from the organizations listed above. Scores and remediation timelines are based on patterns observed across B2B SaaS companies building production AI features.

Run the Audit Before You Build

The readiness audit takes 2 to 3 days and tells you which AI features are buildable today. Skip it and you risk 13.7 months of work on features your data cannot support.