TL;DR

- A strong analytics implementation starts before any SDK work: clarify business questions, define the object model, and write the tracking plan first.

- The checklist should cover pre-implementation review, instrumentation QA, post-launch validation, dashboard readiness, and governance.

- In B2B SaaS, account-level grouping and segmented reporting are part of the implementation, not an optional enhancement.

- If nobody owns naming, QA, and change control after launch, the analytics layer will degrade quickly even if the initial build was solid.

Analytics implementations usually get described as a tooling task: install the SDK, fire a few events, make some dashboards. That framing is the root of the problem.

A usable analytics system is not just code in production. It is a measurement model the team can trust. That requires a checklist that starts before engineering and keeps going after launch.

That is why the checklist has to include strategy, structure, QA, and governance. Otherwise the team gets an event stream, not a system.

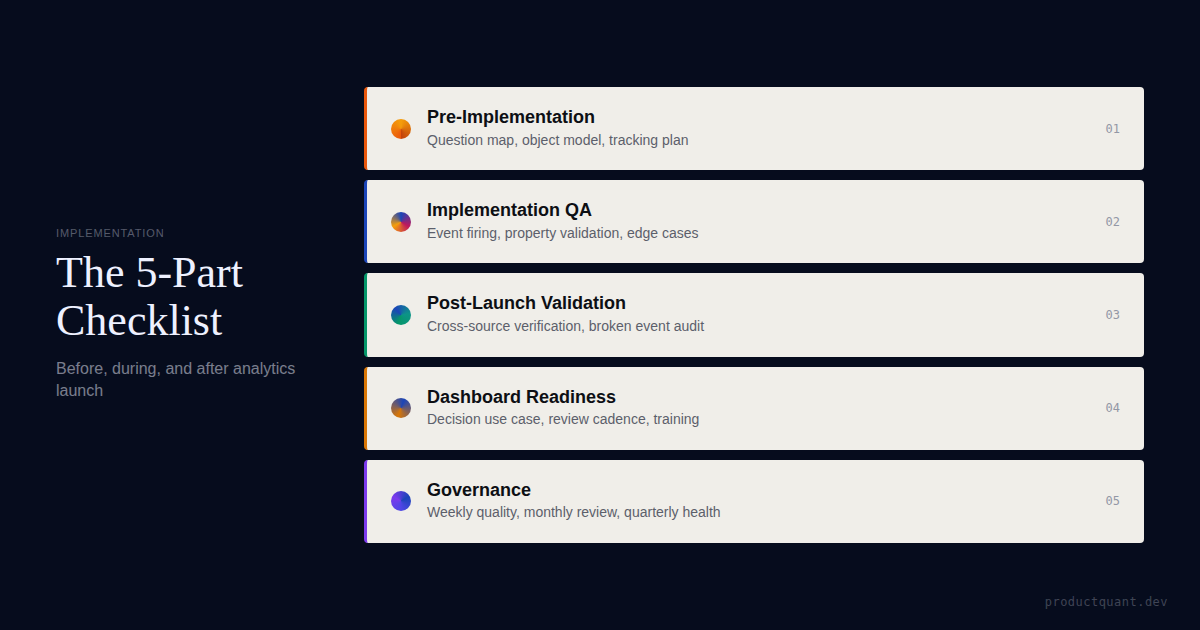

The 5-Part Implementation Checklist

| Phase | What to check | Why it matters |
|---|---|---|
| Pre-implementation | Question map, object model, event naming, property schema, tracking plan review | Prevents expensive ambiguity later |
| Implementation QA | Event firing, property types, null rates, deduplication, edge cases | Turns instrumentation into trustworthy data |
| Post-launch validation | Volume checks, funnel order, distribution sanity, dashboard load and filter tests | Catches broken production behavior quickly |
| Dashboard readiness | Ownership, review cadence, segment cuts, decision use cases | Stops dashboards from becoming unused decoration |
| Governance | Change log, event deprecation, weekly quality review, monthly governance review, quarterly taxonomy health check | Keeps the system from decaying |

Each phase closes a different failure mode. Skip one and the system becomes harder to trust, which means it becomes easier to ignore.

1. Pre-Implementation Review

This is where most analytics debt is either prevented or created. Teams that skip this phase typically produce 2-3x more events than they need — and most of the extras are useless.

Start with the business questions

The team should know which activation, retention, feature adoption, and revenue questions the implementation must answer. If the key questions are vague, the event list will expand without becoming more useful.

At ProductQuant, we start with 10-12 questions maximum. Each question maps to 2-3 events. If a question requires more events to answer, the question is too complex and should be split.
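
One way to keep that ceiling honest is to lint the question map itself. The sketch below assumes the map is kept as a plain dictionary. The questions, event names, and the two limits are illustrative, taken from the upper ends of the 10-12 and 2-3 ranges above, not from any real tracking plan.

```python
# Hypothetical question map: business question -> events needed to answer it.
MAX_QUESTIONS = 12
MAX_EVENTS_PER_QUESTION = 3

question_map = {
    "Which accounts reach first value within 7 days?": [
        "account_created", "project_created", "report_shared",
    ],
    "Which features predict week-4 retention?": [
        "report_shared", "integration_connected",
    ],
    "Where do trials stall before upgrading?": [
        "trial_started", "invite_sent", "billing_page_viewed", "plan_upgraded",
    ],
}

def lint_question_map(qmap):
    """Return a list of human-readable problems found in the question map."""
    problems = []
    if len(qmap) > MAX_QUESTIONS:
        problems.append(f"{len(qmap)} questions exceeds the limit of {MAX_QUESTIONS}")
    for question, events in qmap.items():
        if len(events) > MAX_EVENTS_PER_QUESTION:
            problems.append(f"split this question ({len(events)} events): {question}")
    return problems

problems = lint_question_map(question_map)
for problem in problems:
    print(problem)
```

Here the third question needs four events, so it is the one flagged for splitting.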

Define the object model clearly

In B2B SaaS, user-level analytics alone is usually misleading. The checklist should confirm the person entity, account or workspace entity, core product objects, and the identifiers that connect them.

The most common gap: the team tracks what each user does but not which account they belong to. In a multi-user product, this makes retention and expansion analysis impossible. The person-to-account mapping is not an optional enhancement. It is the foundation of any B2B analytics system.
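
To make the gap concrete, here is a minimal sketch of rolling user activity up to the account level. The user IDs, account IDs, and event tuples are hypothetical. In a real product the mapping would come from the application database or the analytics tool's group feature.

```python
from collections import defaultdict

# Hypothetical person-to-account mapping.
user_to_account = {"u1": "acme", "u2": "acme", "u3": "globex"}

# Raw user-level events as (user_id, event_name, iso_week).
events = [
    ("u1", "report_shared", "2024-W01"),
    ("u2", "report_shared", "2024-W02"),
    ("u3", "report_shared", "2024-W01"),
]

def active_accounts_by_week(events, user_to_account):
    """Roll user activity up to the account level, week by week."""
    active = defaultdict(set)
    for user_id, _event, week in events:
        account_id = user_to_account.get(user_id)
        if account_id is None:
            continue  # unmapped users cannot be attributed to any account
        active[week].add(account_id)
    return dict(active)

weekly = active_accounts_by_week(events, user_to_account)
print(weekly)
```

At the user level, u1 looks churned in the second week. At the account level, acme is still active because u2 picked up the workflow. Without the mapping, that distinction is invisible.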

Review naming and properties before engineering work begins

Event names should be standardized, properties typed, null semantics defined, and sample payloads documented. A tracking plan that lacks these details is not implementation-ready.

What good looks like: every event has a name that describes the action (not the UI element), a list of required properties with types, the conditions under which it fires, and the conditions under which it must not fire.

Engineering should not have to infer what an event means, when it fires, or which properties are required. Those decisions belong in the tracking plan, not in production guesswork.
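
A tracking plan entry can be checked for readiness before it reaches engineering. The sketch below is one possible shape, not a standard: the `EventSpec` fields, the snake_case naming rule, and the example events are all assumptions chosen for illustration.

```python
import re
from dataclasses import dataclass, field

@dataclass
class EventSpec:
    name: str
    required_properties: dict  # property name -> expected Python type
    fires_when: str = ""
    must_not_fire_when: str = ""
    sample_payload: dict = field(default_factory=dict)

# Assumed convention: lowercase snake_case with at least two words, e.g. "report_shared".
NAME_PATTERN = re.compile(r"^[a-z]+(_[a-z]+)+$")

def readiness_gaps(spec):
    """List what is still missing before this entry is implementation-ready."""
    gaps = []
    if not NAME_PATTERN.match(spec.name):
        gaps.append("name does not follow the snake_case convention")
    if not spec.required_properties:
        gaps.append("no required properties listed")
    if not spec.fires_when:
        gaps.append("firing condition not documented")
    if not spec.must_not_fire_when:
        gaps.append("non-firing condition not documented")
    if not spec.sample_payload:
        gaps.append("no sample payload")
    return gaps

ready = EventSpec(
    name="report_shared",
    required_properties={"account_id": str, "report_id": str, "recipient_count": int},
    fires_when="backend confirms the share was saved",
    must_not_fire_when="share dialog is cancelled or the request fails",
    sample_payload={"account_id": "acme", "report_id": "r_42", "recipient_count": 3},
)
vague = EventSpec(name="Share Button Clicked", required_properties={})

print(readiness_gaps(ready))
print(readiness_gaps(vague))
```

The second entry names a UI element and documents nothing else, so every check fails. That is the kind of entry that forces engineering to guess.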

2. Implementation QA

After the events are wired, the next job is to prove they behave correctly.

Event firing checks

- The event fires on the happy path — if it doesn't fire when the user completes the action, nothing downstream works

- It fires exactly once — duplicate events inflate every metric and make dashboards unreliable

- It does not fire on cancel or failure states — events that fire on failed actions create phantom funnels

- It fires after the successful state change, not before — firing before the backend confirms the action means the analytics system records intentions, not outcomes
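
The first three checks can be run against a captured QA session. This is a rough sketch that assumes each user action carries an idempotency key (`action_id` here) and that the tester logs the outcome of each action. Both are hypothetical conventions, not features of any particular SDK.

```python
from collections import Counter

# Hypothetical capture from a QA session: (event_name, action_id) per event seen.
captured = [
    ("report_shared", "a1"),
    ("report_shared", "a2"),
    ("report_shared", "a2"),  # a double click produced a duplicate
]

# What the tester actually did: action_id -> outcome.
actions = {"a1": "success", "a2": "success", "a3": "cancelled", "a4": "success"}

def firing_problems(captured, actions, event_name):
    """Compare captured events with the tester's actions and report mismatches."""
    counts = Counter(aid for name, aid in captured if name == event_name)
    problems = []
    for action_id, outcome in actions.items():
        n = counts.get(action_id, 0)
        if outcome == "success" and n == 0:
            problems.append((action_id, "missing on happy path"))
        elif outcome == "success" and n > 1:
            problems.append((action_id, f"fired {n} times"))
        elif outcome != "success" and n > 0:
            problems.append((action_id, "fired on a non-success outcome"))
    return problems

result = firing_problems(captured, actions, "report_shared")
print(result)
```

The fourth check, firing after the state change rather than before, is not covered here. It usually needs a comparison between event timestamps and backend records.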

Property validation

- Required properties are present — any event that sometimes fires without its key properties will break downstream analysis

- Types are correct — a property that is sometimes a string and sometimes an integer will make segmentation impossible

- Enum values are controlled — if property values are free text instead of a controlled list, analysis becomes noisy

- Account identifiers are populated for B2B analysis — missing account IDs make it impossible to segment by company, plan, or team size
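
These four checks translate directly into a payload validator. The schema below is a hypothetical example for a single event. The property names and the plan values are assumptions, and a real setup would load the schema from the tracking plan.

```python
# Hypothetical schema: property -> (expected type, allowed values or None).
SCHEMA = {
    "account_id": (str, None),
    "plan": (str, {"free", "team", "enterprise"}),
    "seat_count": (int, None),
}

def validate_payload(payload, schema=SCHEMA):
    """Return a list of property-level errors for one event payload."""
    errors = []
    for prop, (expected_type, allowed) in schema.items():
        value = payload.get(prop)
        if value is None:
            errors.append(f"{prop}: missing")
        # Exact type match, so "12" is not accepted as an int and True is not either.
        elif type(value) is not expected_type:
            errors.append(
                f"{prop}: expected {expected_type.__name__}, got {type(value).__name__}"
            )
        elif allowed is not None and value not in allowed:
            errors.append(f"{prop}: '{value}' is not in the controlled list")
    return errors

good = {"account_id": "acme", "plan": "team", "seat_count": 12}
bad = {"plan": "Team Plan", "seat_count": "12"}

print(validate_payload(good))
print(validate_payload(bad))
```

The second payload fails three ways at once: no account ID, a free-text plan value, and a seat count sent as a string.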

Edge-case handling

The checklist should explicitly test duplicate clicks, bulk actions, API-triggered events, refresh behavior, and any mobile or offline flows that can distort counts.

Most analytics bugs are not "the event never fired." They are quieter than that. The event fires twice. The property becomes a string instead of an integer. The account identifier drops off in one workflow. QA is what catches those failures before the dashboards normalize them.

External resources such as PostHog's best practices guide and Amplitude's event taxonomy guides confirm the same pattern: the most expensive bugs are the silent ones, where events fire with wrong data rather than failing to fire at all.

3. Post-Launch Validation

A launch is not the end of validation. Production traffic is where bad assumptions finally show up.

Volume and distribution checks

Expected volumes should be compared with reality. Zero-volume events, sudden spikes, and impossible distributions usually reveal implementation issues immediately.

What to look for: an event that fired 50 times in staging but 5,000 times in production on day one. An event that fired 0 times. A property that was always populated in testing but is null in 30% of production events.
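
A first-day check along these lines can be a few lines of code. This sketch assumes the team has written down a rough expected daily volume per event. The event names, the counts, and the 3x tolerance are illustrative assumptions to tune, not recommended thresholds.

```python
def volume_flags(expected, actual, tolerance=3.0):
    """Flag events whose volume is zero or off by more than the tolerance factor."""
    flags = {}
    for event, exp in expected.items():
        got = actual.get(event, 0)
        if got == 0:
            flags[event] = "zero volume"
        elif got > exp * tolerance or got < exp / tolerance:
            flags[event] = f"expected ~{exp}, got {got}"
    return flags

def null_rate(payloads, prop):
    """Share of payloads where the property is missing or None."""
    if not payloads:
        return 0.0
    missing = sum(1 for p in payloads if p.get(prop) is None)
    return missing / len(payloads)

# Hypothetical numbers for one day of production traffic.
expected_daily = {"report_shared": 50, "invite_sent": 200, "plan_upgraded": 10}
actual_daily = {"report_shared": 5000, "invite_sent": 180}

flags = volume_flags(expected_daily, actual_daily)
payloads = [{"account_id": "acme"}] * 7 + [{"account_id": None}] * 3
rate = null_rate(payloads, "account_id")

print(flags)
print(f"account_id null rate: {rate:.0%}")
```

The point is not the exact threshold. It is that someone wrote down an expectation before launch, so a surprise is recognizable as a surprise.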

Funnel sanity checks

If users are reaching later funnel events without earlier prerequisite events, something is wrong in the event logic or the data model. The checklist should force that review right away.
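
The prerequisite check can be sketched as follows. The funnel steps, user IDs, and integer timestamps are hypothetical, and the sketch assumes a strictly linear funnel where each step requires every earlier one.

```python
# Assumed funnel order; each step requires all earlier steps.
FUNNEL = ["signup_completed", "project_created", "report_shared"]

def funnel_violations(events_by_user, funnel=FUNNEL):
    """Return {user_id: [(step, missing_or_late_prerequisite), ...]}."""
    violations = {}
    for user_id, events in events_by_user.items():
        # Earliest timestamp at which each funnel step was seen for this user.
        first_seen = {}
        for name, ts in events:
            if name in funnel and (name not in first_seen or ts < first_seen[name]):
                first_seen[name] = ts
        issues = []
        for i, step in enumerate(funnel):
            if step not in first_seen:
                continue
            for prereq in funnel[:i]:
                if prereq not in first_seen or first_seen[prereq] > first_seen[step]:
                    issues.append((step, prereq))
        if issues:
            violations[user_id] = issues
    return violations

events_by_user = {
    "u1": [("signup_completed", 1), ("project_created", 2), ("report_shared", 3)],
    "u2": [("signup_completed", 1), ("report_shared", 2)],   # skipped a step
    "u3": [("project_created", 1), ("signup_completed", 5)], # out of order
}

violations = funnel_violations(events_by_user)
print(violations)
```

A handful of violations may be legitimate, for example users created through an import. A large share usually points at event logic or identity stitching.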

Dashboard validation

The dashboards must load, filters must work, and the charts must reflect the intended population. This is where you find out whether the implementation supports segmentation by plan, role, account type, or acquisition source the way the team expected.

As event taxonomy guides for growing teams note, the taxonomy that works for 1,000 users rarely works for 10,000 without review. Post-launch validation is where you catch the scaling gaps.

Get the implementation checklist sheet

This CSV is structured by phase so product, analytics, and engineering can run the same implementation review without improvising the process every time.

4. Dashboard Readiness and Handoff

Implementations fail when dashboards are treated as the final deliverable instead of the first operational layer.

Each dashboard needs a decision use case

The checklist should record who uses the dashboard, how often, which segments matter, and what decision it should support. A dashboard without an owner is just a report with no future.

Review cadence matters

Activation, retention, adoption, and revenue dashboards should fit into existing review rituals. If the implementation creates a reporting surface with no meeting, no owner, and no decision path, the data will not compound.

Training is part of implementation

The handoff is incomplete if PMs, analysts, and leaders do not know how to interpret the new dashboards or request additions to the taxonomy safely.

The right training covers three things: how to build a query, how to read the result, and when to escalate a data quality issue. Most teams train only the first one.

5. Governance After Launch

The implementation checklist should end with maintenance, not stop at launch. Without governance, even a perfect implementation decays within months.

Weekly quality review

Check event volume anomalies, null rates, new unknown events, and whether the critical dashboards still reflect the live product correctly.

The signals that matter: an event that was firing 10,000 times per week and suddenly drops to 500. A property that went from 0% null to 25% null. Fifty new unknown event names appearing after a sprint. None of these breaks the system immediately. All of them corrode it.
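
Those three signals are simple to automate once weekly summaries exist. This sketch assumes a per-event summary of count and null rate for two consecutive weeks. The 50% drop ratio and the 10-point null-rate jump are placeholder thresholds, and the event names are made up.

```python
def weekly_signals(prev, curr, known_events, drop_ratio=0.5, null_jump=0.10):
    """prev/curr: {event: {"count": int, "null_rate": float}} for consecutive weeks."""
    signals = []
    for event, before in prev.items():
        after = curr.get(event, {"count": 0, "null_rate": 0.0})
        if after["count"] < before["count"] * drop_ratio:
            signals.append(f"{event}: volume {before['count']} -> {after['count']}")
        if after["null_rate"] - before["null_rate"] > null_jump:
            signals.append(
                f"{event}: null rate {before['null_rate']:.0%} -> {after['null_rate']:.0%}"
            )
    # Anything in this week's stream that the tracking plan does not know about.
    unknown = sorted(set(curr) - set(known_events))
    if unknown:
        signals.append(f"{len(unknown)} unknown event(s): {', '.join(unknown)}")
    return signals

prev = {
    "report_shared": {"count": 10000, "null_rate": 0.0},
    "invite_sent": {"count": 4000, "null_rate": 0.0},
}
curr = {
    "report_shared": {"count": 500, "null_rate": 0.0},
    "invite_sent": {"count": 4100, "null_rate": 0.25},
    "btn_click_v2": {"count": 80, "null_rate": 0.0},
}

signals = weekly_signals(prev, curr, known_events={"report_shared", "invite_sent"})
for signal in signals:
    print(signal)
```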

Monthly governance review

Review unused events, deprecated logic, dashboard sprawl, and naming drift. The question is not only "is the data working?" but also "is the model still coherent?"

What typically happens without governance: a team starts with 47 events and ends up with more than 200 six months later. Most of the new events were added ad hoc during sprints. Nobody documented them. Nobody knows what they mean. The taxonomy that was once a trusted system becomes a guessing game.
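
A monthly review can start from a three-way comparison of event names. The sketch below assumes three lists can be exported: the names in the tracking plan, the names seen in the live stream during the period, and the names referenced by saved dashboards or queries. How those lists are exported depends on the tool, and the names used here are hypothetical.

```python
def taxonomy_drift(tracking_plan, live_events, queried_events):
    """Compare documented, firing, and actually-used event names."""
    plan, live, queried = set(tracking_plan), set(live_events), set(queried_events)
    return {
        # Firing in production but never documented.
        "undocumented": sorted(live - plan),
        # Documented but absent from the stream.
        "not_firing": sorted(plan - live),
        # Documented and firing, but no dashboard or query uses them.
        "unused": sorted((live & plan) - queried),
    }

drift = taxonomy_drift(
    tracking_plan=["report_shared", "invite_sent", "plan_upgraded", "export_started"],
    live_events=["report_shared", "invite_sent", "export_started", "btnClick2", "tmp_test"],
    queried_events=["report_shared", "invite_sent"],
)
print(drift)
```

Each bucket has a different remedy: document or remove the undocumented events, investigate the ones that stopped firing, and consider deprecating the unused ones.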

Quarterly taxonomy health check

Any major change in activation path, pricing, product packaging, or account structure should trigger a re-check of the taxonomy. Otherwise the analytics layer starts describing an old product.

Resources like Amplitude's tracking plan best practices confirm the same pattern: teams that maintain governance see compounding value from their analytics, while teams that do not watch the data degrade until they have to rebuild.

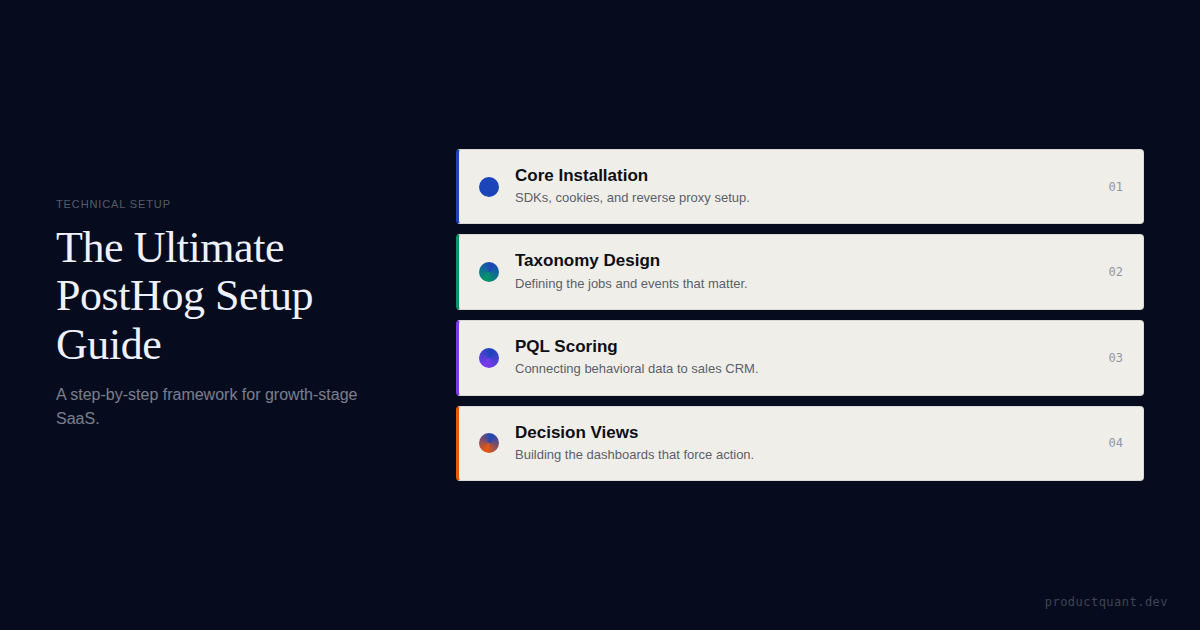

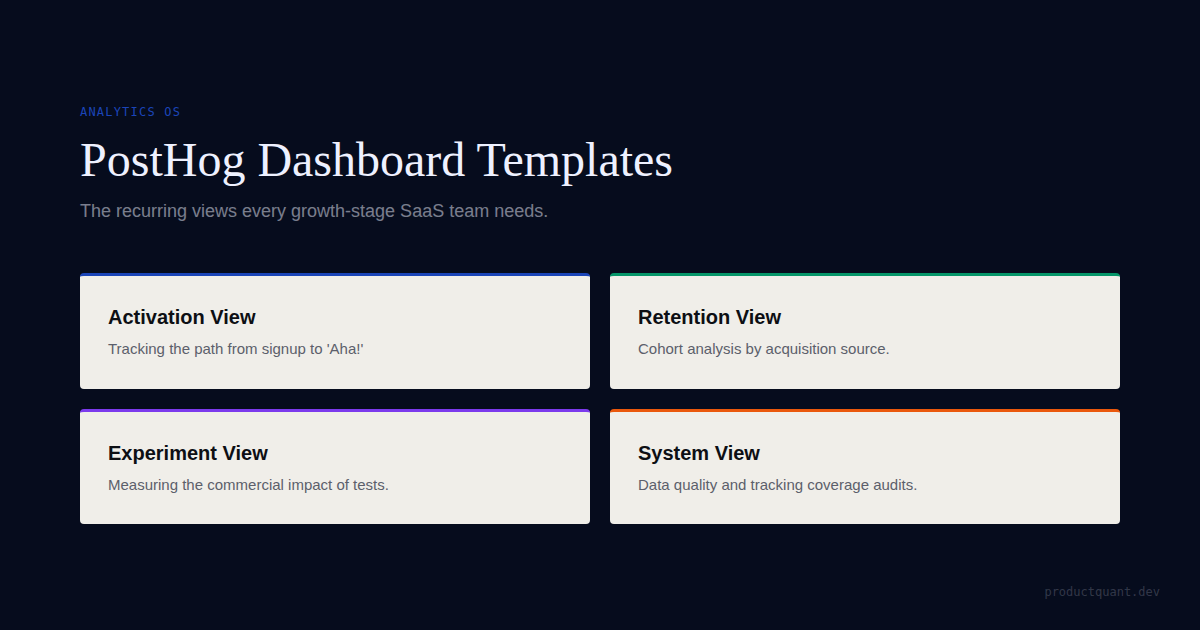

Checklist, setup, and dashboard design are three different jobs

This checklist article covers the implementation lifecycle. The setup guide covers tool configuration. The template article covers the first dashboard set worth building.

FAQ

Is this checklist only for PostHog?

No. The checklist is tool-agnostic. The sequence applies whether the team uses PostHog, Amplitude, Mixpanel, or another stack. The questions are the same: does the event fire, does it fire once, does it fire correctly, and does someone own it after launch? The tool only determines how you check the answers.

What is the most skipped step?

Usually pre-implementation review or post-launch validation. Teams either move too fast into coding or assume that once events appear in the tool, the work is done. Both skips are expensive. Skipping pre-implementation review means the team builds the wrong events. Skipping post-launch validation means they never find out.

Why is governance part of implementation?

Because without governance, a good implementation lasts only until the next meaningful product change. Governance is what keeps the implementation useful over time. A team that ships one product update per sprint without a taxonomy review will have an unusable analytics layer within two quarters.

What if the current analytics setup is already messy?

Then the checklist still helps. It becomes a remediation plan instead of a first-time rollout plan: identify the gaps, re-document the taxonomy, validate the current events, and retire what should not survive. The same five phases apply — you are just starting further behind.

How long does a proper implementation take?

For a typical B2B SaaS product with 40-60 events across 3-5 core workflows: 1-2 weeks for the tracking plan, 1-2 weeks for wiring and QA, and 1 week for post-launch validation. Dashboard work runs in parallel. Governance starts immediately after launch.


If the implementation is not trusted, the dashboards will not matter.

The point of the checklist is to make analytics trustworthy enough that product, growth, and leadership actually use it to settle decisions.