TL;DR

- A paid channel test is not a winner-selection ritual. It is a learning system for deciding what deserves another round of spend.

- Equal-budget windows make channels comparable early. You are not looking for perfect certainty. You are looking for which channels clear the next threshold and which ones should be killed.

- Cheap clicks are not the same thing as scalable demand. In one SaaS experiment set, low-cost traffic still needed replay review, device cuts, and signup-quality checks before it meant anything.

- Setup friction is part of channel economics. If one platform requires extra verification, extra waiting, or more technical setup before you can test properly, that should affect the decision.

Most early-stage SaaS teams say they are "testing channels," but what they are often doing is buying visibility and then rewarding whichever dashboard looks busiest.

Testing channels sounds disciplined on paper. In practice, it usually means one of three things:

- the team scales the platform with the most impressions

- the team celebrates the lowest CPC without checking traffic quality

- the team gets discouraged because nothing looks clean fast enough

All three are avoidable if the experiment is structured properly. The useful question is not "which platform gave us more activity?" It is "which platform produced the kind of visitor or signup we would actually want more of?"

That distinction matters because different platforms surface different types of false positives. Quora can look promising if reach is large but keyword intent is loose. Reddit can look promising if clicks are cheap but the community fit is wrong. Meta can tempt you into creative volume before the offer is sharp enough. X can impose enough setup friction that the economics are worse before the first ad even runs.

What Is the Right Testing Method?

The clean version is simple. Pick one offer, define one threshold, and run channels through a comparable window.

- Start with one promise. Do not test four channels with four different offers and then pretend you learned something about the platforms.

- Use similar test windows and spend envelopes. Equality does not need to be perfect, but it should be close enough that the channels are comparable.

- Define the decision metric before launch. If possible, use qualified leads. If not, use the best validated proxy you can actually trust.

- Inspect behavior after the click. Replays, session quality, signup completion, and device cuts matter more than top-line click volume.

- Kill channels that miss the threshold. The experiment only works if you are willing to stop buying attractive noise.
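The steps above can be sketched as a small decision rule. This is a minimal illustration rather than the logic used in the engagement: the `ChannelResult` shape, the `decide` helper, the $40 threshold, and every number in the usage are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ChannelResult:
    """One channel's outcome over a comparable test window (hypothetical shape)."""
    name: str
    spend: float            # ad spend in the window, in dollars
    validated_signups: int  # signups that passed post-click quality review


def cost_per_validated_signup(result: ChannelResult) -> float:
    """Spend divided by validated signups; infinite when nothing validated."""
    if result.validated_signups == 0:
        return float("inf")
    return result.spend / result.validated_signups


def decide(result: ChannelResult, max_cost: float) -> str:
    """Return 'continue' if the channel clears the pre-set threshold, else 'kill'."""
    return "continue" if cost_per_validated_signup(result) <= max_cost else "kill"


# Illustrative numbers only. The threshold is fixed before launch, not after.
MAX_COST = 40.0
results = [
    ChannelResult("channel_a", spend=150.0, validated_signups=5),
    ChannelResult("channel_b", spend=150.0, validated_signups=1),
    ChannelResult("channel_c", spend=150.0, validated_signups=0),
]
decisions = {r.name: decide(r, MAX_COST) for r in results}
print(decisions)
```

Equal spend per channel keeps the comparison simple, and treating zero validated signups as infinite cost means a busy but empty channel can never pass the threshold by accident.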

In one archived body of SaaS demand-generation work, the paid acquisition layer alone contained 22 campaign files across Quora, Reddit, Meta, and X. The useful lesson was not that every platform worked. It was that each platform demanded a different validation logic before it deserved scale.

The original brief behind this article used "cost per qualified lead" as the core yardstick. That is directionally right. But the stronger operational rule is this: use the best quality-adjusted metric your current instrumentation can support honestly. If your system only supports confirmed signups plus replay-level quality review, use that instead of pretending you have deeper attribution than you do.

What Did the Cross-Channel Work Actually Show?

The case-specific numbers below came from an investing SaaS platform that tested multiple acquisition surfaces and tracked them closely enough to compare not just traffic cost, but traffic quality.

| Channel | What the source set showed | What teams usually misread |
|---|---|---|
| Quora | High-traffic interest campaigns were turned off after producing impressions without signup conversion. A later six-day window spent $156 and produced 4 confirmed signups, or roughly $39 per signup. The best campaign cut reached $8.3 cost per signup. | Teams often confuse audience size with buying intent. Behavioral and keyword precision mattered more than broad reach. |
| Reddit | Campaign docs showed $0.12-$0.30 CPC, campaign-level CTR around 0.27%-0.30%, and the best ad-set CTR at 0.38%. Strategy docs recommended a $500/week test structure with 80% awareness and 20% remarketing. | Cheap clicks and respectable CTR can still hide weak conversion or low-fit subreddit traffic. Engagement is not the same thing as demand quality. |
| Meta | The hands-down winner. Meta users signed up at a much higher rate, had longer session duration, and visited more pages than traffic from any other channel. Session recordings confirmed the quality difference visually: Meta leads behaved like engaged prospects from the first click. | Meta looks scalable, which tempts teams to scale before validating. But once the quality signal was confirmed through session recordings, consolidation made sense. |
| X | Showed similar performance to Reddit: comparable click costs, comparable signup rates, comparable session quality. X also carried a $200/month organizational verification prerequisite before serious testing could begin. | Teams compare ad spend without including platform-entry cost. X and Reddit were good enough to keep running, but not strong enough to justify splitting a small startup budget across four platforms. |
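The arithmetic behind the Quora and Reddit rows is worth making explicit. The sketch below uses only the figures quoted in the table; the variable names are illustrative.

```python
# Quora: six-day window quoted in the table.
quora_spend = 156.0
quora_signups = 4
quora_cost_per_signup = quora_spend / quora_signups  # blended cost per signup

# Gap between the blended figure and the best campaign cut ($8.3).
quora_best_cut = 8.3
blended_vs_best = round(quora_cost_per_signup / quora_best_cut, 1)

# Reddit: recommended $500/week structure, split 80% awareness / 20% remarketing.
reddit_weekly_budget = 500.0
reddit_awareness = reddit_weekly_budget * 80 / 100
reddit_remarketing = reddit_weekly_budget * 20 / 100

print(quora_cost_per_signup, blended_vs_best)
print(reddit_awareness, reddit_remarketing)
```

The blended Quora number sits several times above the best cut, which is the table's point in numeric form: a channel-level average can hide a campaign cut that behaves very differently.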

This is why "which channel worked?" is too blunt a question. The better questions are:

- which channel produced quality at a cost we can tolerate?

- which channel required too much setup overhead for the learning it produced?

- which channel looked good on top-line metrics but weakened under quality review?

The strategic point is not that any one platform is always better. It is that different channels fail in different ways, and the experiment should be designed to catch that early.

The Actual Outcome: What We Did With the Results

Meta was the hands-down winner. We could see it immediately with signups — but the confirmation came from watching session recordings of leads from each platform side by side. Meta users signed up at a much higher rate, had longer session duration, and visited more pages per session than traffic from any other channel.

X showed similar performance to Reddit: comparable click costs, comparable signup rates, comparable session quality. We could have kept both running, but for an early-stage startup it made sense to consolidate all our Quora, Reddit, and X spend into Meta, where the signal was strongest.

The reallocation decision was not based on which platform we preferred. It was based on which platform produced validated signups at a cost we could scale.

That does not mean the other platforms became useless — they just stopped being paid acquisition channels:

- Reddit became a venue for SEO and generative engine optimization (GEO): organic community participation, not paid ads.

- Quora became the same: answer-based organic content for search and AI visibility, not paid spend.

- X became the main social media platform for publishing organic content — thought leadership, thread distribution, and brand building, not paid acquisition.

The experiment did not tell us which platforms to use. It told us which platforms to pay for. The distinction matters because the platforms that fail as paid channels can still be valuable as organic surfaces — just not with ad spend behind them.

Why Traffic Validation Matters More Than Click Volume

The strongest insight in the source set was not a platform ranking. It was the validation habit that sat behind the ranking.

One spreadsheet tied campaign cuts to PostHog replay links and downstream behavior. A separate device breakdown concluded that mobile and Android were the strongest-performing cut in that experiment snapshot. That matters because a top-line campaign result can hide very different traffic quality by device, country, or targeting layer.
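A device cut of this kind can be sketched in a few lines. The rows, field names, and `signup_rate_by` helper below are hypothetical stand-ins for whatever an analytics export provides; they are not the client's data or any particular tool's schema.

```python
from collections import defaultdict

# Hypothetical session rows for a single campaign.
sessions = [
    {"device": "android", "signed_up": True},
    {"device": "android", "signed_up": False},
    {"device": "ios", "signed_up": False},
    {"device": "ios", "signed_up": False},
    {"device": "desktop", "signed_up": True},
    {"device": "desktop", "signed_up": False},
    {"device": "desktop", "signed_up": False},
    {"device": "desktop", "signed_up": False},
]


def signup_rate_by(rows, key):
    """Group rows by `key` and return the signup rate for each group."""
    totals = defaultdict(int)
    signups = defaultdict(int)
    for row in rows:
        totals[row[key]] += 1
        signups[row[key]] += int(row["signed_up"])
    return {group: signups[group] / totals[group] for group in totals}


overall_rate = sum(r["signed_up"] for r in sessions) / len(sessions)
rate_by_device = signup_rate_by(sessions, "device")
print(overall_rate, rate_by_device)
```

In this made-up sample the overall rate looks uniform, while the per-device view shows one cut carrying the result and another contributing nothing. The same helper works for country or targeting layer by changing the key.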

If you only look at the ad dashboard, you are still upstream from the real answer. The real answer starts after the click:

- Did the visitor reach the page you intended?

- Did they interact with pricing, signup, or product surfaces?

- Did the signup complete cleanly?

- Did low-intent or broken sessions distort the channel's apparent performance?

The easiest error in channel testing is scaling a dashboard story before validating a user story. A click can be cheap, a CTR can be decent, and the traffic can still be strategically useless.
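One way to turn the four post-click questions into a count is to record a small review result per session and only credit signups that pass all checks. The `SessionReview` fields and the sample reviews are hypothetical; how each flag gets set (replay review, event data, or both) is left open.

```python
from dataclasses import dataclass


@dataclass
class SessionReview:
    """Outcome of reviewing one post-click session (hypothetical fields)."""
    reached_intended_page: bool
    touched_key_surface: bool   # pricing, signup, or product surface
    signup_completed: bool
    broken_or_low_intent: bool  # flagged during replay review


def is_validated_signup(review: SessionReview) -> bool:
    """A signup counts only if the whole post-click journey holds up."""
    return (
        review.reached_intended_page
        and review.touched_key_surface
        and review.signup_completed
        and not review.broken_or_low_intent
    )


reviews = [
    SessionReview(True, True, True, False),     # clean signup
    SessionReview(True, False, True, True),     # signup, but flagged low intent
    SessionReview(False, False, False, True),   # broken landing
    SessionReview(True, True, False, False),    # engaged, no signup
]

raw_signups = sum(r.signup_completed for r in reviews)
validated_signups = sum(is_validated_signup(r) for r in reviews)
print(raw_signups, validated_signups)
```

The gap between `raw_signups` and `validated_signups` is the difference between the dashboard story and the user story.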

This is also why cost per qualified lead is ideal only when your qualification layer is real. If not, the better move is to say: "We are currently using confirmed signups plus replay-validated engagement as our decision metric until the qualification layer is cleaner." That is operational honesty. It is far better than pretending impressions or CTR tell you enough.

If your team is still comparing channels inside ad dashboards alone, the experiment system is incomplete.

ProductQuant helps SaaS teams define quality thresholds, validation logic, and the instrumentation layer needed to decide what deserves scale.

What Should You Do Instead?

Run the next round with a stricter checklist.

- Lock the offer first. If the message keeps changing, you are testing copy drift, not channels.

- Give channels comparable windows. The point is fast learning, not false precision.

- Decide the threshold before launch. Cost per qualified lead is best; replay-validated signup quality is an acceptable fallback if that is the honest state of the system.

- Count setup friction as part of cost. Verification requirements, business-account overhead, and tracking complexity are part of the experiment.

- Review traffic quality after the click. Device splits, replays, activation steps, and broken journeys all matter.

- Shut off attractive noise quickly. High impressions with low-quality behavior should lose, even if the dashboard looks busy.

- Only then double down. Scale should follow validated signal, not channel enthusiasm.
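Counting setup friction as part of cost can be as simple as adding platform-entry fees to the numerator. In the sketch below, the $200 verification fee is the figure cited for X earlier in the article; the ad spend, the signup count, and the choice to charge one full month of the fee to the test are assumptions for illustration.

```python
def loaded_cost_per_signup(ad_spend: float, entry_cost: float, signups: int) -> float:
    """Cost per signup including platform-entry cost; infinite with no signups."""
    if signups <= 0:
        return float("inf")
    return (ad_spend + entry_cost) / signups


X_VERIFICATION_FEE = 200.0  # monthly prerequisite cited in the article
ad_spend = 300.0            # hypothetical test spend
signups = 10                # hypothetical validated signups

ad_only = loaded_cost_per_signup(ad_spend, 0.0, signups)
fully_loaded = loaded_cost_per_signup(ad_spend, X_VERIFICATION_FEE, signups)
print(ad_only, fully_loaded)
```

On a small test budget the entry fee can be a large share of total cost, so comparing channels on ad spend alone flatters the platform with the highest setup overhead.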

The practical output of a channel experiment should be a decision memo, not a screenshot of the ads manager. Which channels deserve another round? Which ones should be paused? What message needs sharpening before the next test? What tracking still has to be fixed before the answer is trustworthy?

That is how channel testing becomes a growth system instead of a spend habit.

FAQ

What is the biggest mistake in SaaS channel experiments?

Optimizing for impressions, clicks, or CTR before defining a quality threshold. A channel is only promising if it produces the kind of visitor, signup, or lead you would actually want more of.

Should every channel get the same budget in an early experiment?

Not forever, but early on it is useful. Comparable spend windows make channels easier to compare before you have enough evidence to justify asymmetric scaling.

What if I cannot measure qualified leads yet?

Use the best quality proxy you can support honestly, such as confirmed signups, replay-validated engagement, or a meaningful activation step. Do not pretend vanity metrics are decision metrics.

Why does setup friction matter in channel selection?

Because it changes experiment economics. If one platform imposes extra cost, extra waiting, or extra setup before the first valid test, that channel is more expensive than the ad spend alone suggests.

Sources

- Client engagement analysis: demand generation experiment setup and performance review for an investing SaaS platform (client name withheld), including Quora, Reddit, Meta, and X channel analysis from late 2024 to early 2025

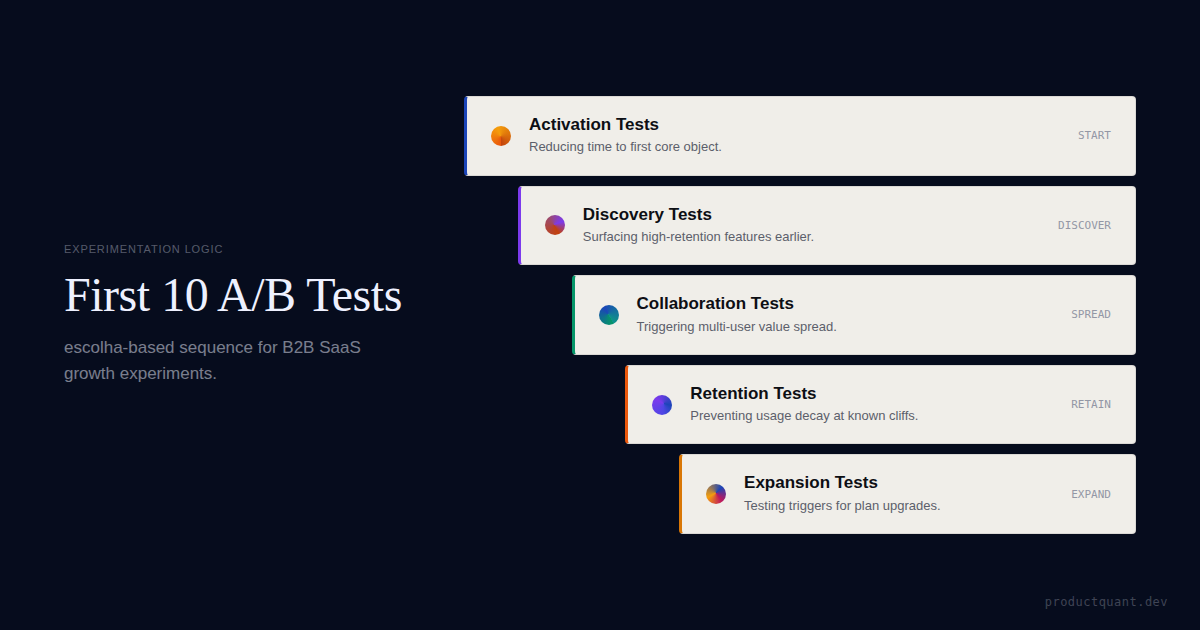

- Your First 10 A/B Tests: The Experimentation Playbook for B2B SaaS

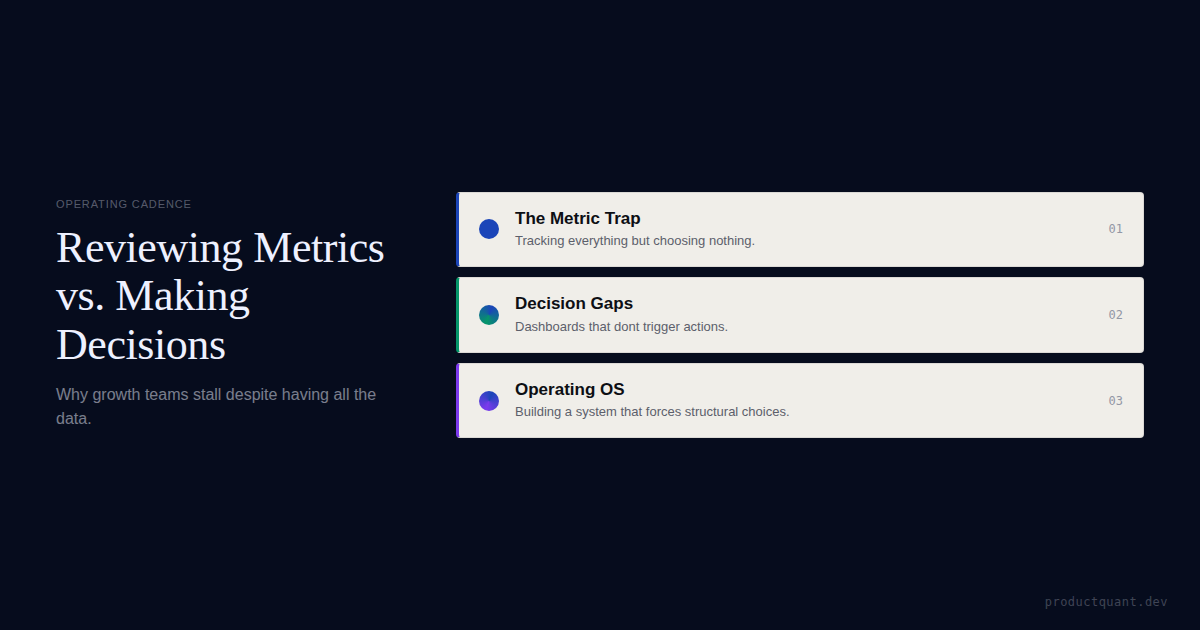

- Growth Metrics That Produce Reports, Not Decisions

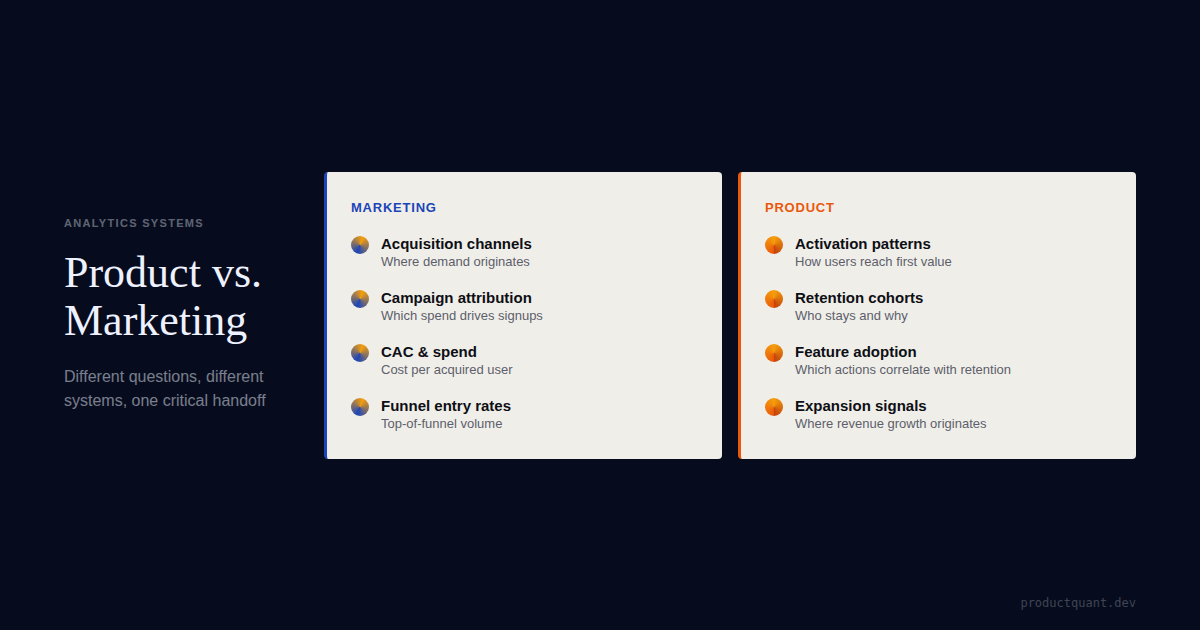

- Product Analytics vs Marketing Analytics

Free tool: Calculate the sample size your experiment needs → Sample Size Calculator

If your channel tests keep producing opinions instead of decisions, fix the experimentation system first.

ProductQuant helps SaaS teams define quality thresholds, instrumentation, and experiment logic so budget allocation follows validated signal rather than dashboard noise.